Key Takeaways

- The AI industry is trapped in "Innovation Theater," prioritizing grand narratives and benchmark scores over verifiable, real-world results. This creates a dangerous disconnect between hype and enterprise reality.

- Public benchmarks are poor proxies for enterprise value. Models that excel on clean, public datasets often fail when confronted with the complexity and messiness of proprietary corporate data.

- The next frontier is not a marginally better LLM, but the infrastructure that grounds AI in reality. Verifiable execution, not just probabilistic generation, is the key to unlocking enterprise value.

- Epsilla’s Agent-as-a-Service (AaaS) framework, built on a Semantic Graph, provides the necessary memory, context, and orchestration to move from impressive demos to auditable, deterministic business automation.

As a founder in the AI infrastructure space, I exist in a state of productive cognitive dissonance. On one hand, the pace of foundational model development is genuinely staggering. The capabilities of models like GPT-5, Claude 4, and Llama 4, arriving in 2026, are creating tangible new possibilities. On the other hand, I attend conferences, sit in boardrooms, and read funding announcements with a growing sense of unease. My intuition, and I suspect yours, points to the same conclusion: we are deep in the throes of AI Innovation Theater.

This is the parallel universe constructed from benchmark leaderboards, VC pitch decks, and carefully curated demos. It’s a world where founders hold up their GPU bills as proof of their commitment to changing humanity, where a single model architecture diagram is used to explain everything, and where a press release can substitute for a validated result.

This isn't a critique of AI's potential. It's a critique of a specific culture that has taken hold—a culture that rewards the art of drawing pies over the discipline of building bakeries. It's an execution-focused analysis for those of us who feel a void after watching another dazzling demo that seems to defy explanation, because it often defies reality.

The Grand Narrative and the Proxy Trap

You’ve heard the refrains. "We are using large models to redefine the paradigm of financial analysis." "Our agent surpasses human experts in zero-shot legal discovery." "We will disrupt the entire logistics industry in five years."

These statements share a common architecture: a grand narrative devoid of falsifiable predictions, concrete timelines, or acknowledged failure modes. They are designed to be impenetrable. Question the real-world validation, and you're told you don't understand the complexity of the domain. Ask for an error rate, and you're informed that traditional metrics no longer apply to this "new paradigm."

This is a defensive posture born from a fundamental flaw in the current ecosystem. The model itself has become the product, and its performance on a public benchmark has become the primary measure of its worth. But a benchmark is a proxy—a simplified, sanitized, and often compromised representation of a real-world problem. Excelling on a benchmark is not the same as solving the problem. It’s like mistaking a medical school exam score for the ability to perform surgery.

In the enterprise, this proxy trap is lethal. A model trained to perfection on a public dataset of customer service chats will implode when faced with your company’s unique lexicon of internal acronyms, legacy product names, and the unstructured conversational data buried in a dozen disconnected Salesforce instances and Zendesk tickets. The illusion of competence, so convincing in the demo, shatters upon contact with operational reality.

The most feared question in the Innovation Theater is the one that bridges this gap: "What specific, novel prediction did your model make that was then validated by a real-world, independent outcome?" When the answer devolves into a five-minute monologue on architectural novelty without a single concrete example, you can be sure you are watching a performance, not a demonstration of capability.

The Ecosystem of Incentives

This is not a problem of individual bad actors. It is a systemic issue driven by misaligned incentives. VCs need a grand vision to justify valuations, and a story about "redefining a paradigm" is more compelling than one about the slow, arduous work of data integration and workflow automation. Academic and corporate research labs need novel publications, and the fastest path is often to be the "first" to apply a new architecture to an old, well-defined dataset, nudging a benchmark score up by two points.

The result is a massive misallocation of capital, both financial and intellectual. Brilliant minds and vast computational resources are funneled into overfitting to benchmarks, while the truly hard problems of enterprise AI are neglected. These are the problems that lack clean public datasets, that require deep domain expertise, and that involve orchestrating complex, multi-step processes. They don't yield a tidy paper or a viral tweet, but they are the only problems that actually matter for creating durable business value.

This ecosystem rewards those who can tell the best story about the future, not those who are building it. It rewards the demo that runs under perfect conditions and whose failures are never documented. It creates a culture where the loudest, most optimistic voices drown out the quiet, rigorous work of those building robust, verifiable systems.

The Antidote: Grounding AI in Verifiable Reality

The path out of the Innovation Theater is not a better model. It's better infrastructure. The fundamental challenge is no longer about generating more plausible text; it's about ensuring AI actions are grounded in the verifiable truth of your organization and result in deterministic, auditable outcomes.

This is the core thesis behind Epsilla. We recognized that the value of AI in the enterprise is directly proportional to its connection to ground-truth data. An AI agent, no matter how sophisticated its reasoning engine, is useless if it's hallucinating facts or operating on a flawed understanding of your business reality.

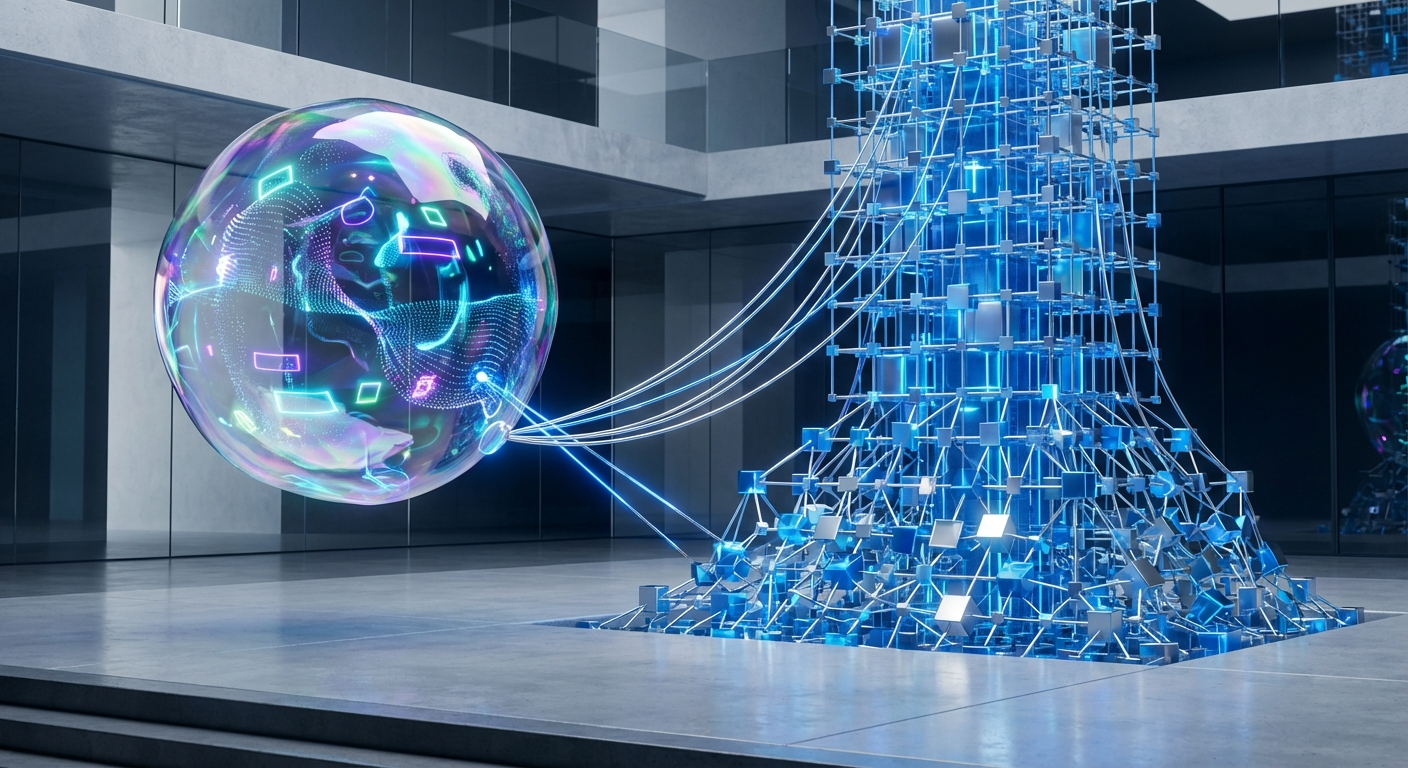

This is where the Semantic Graph becomes the foundational layer. It’s not just another vector database; it's a dynamic, interconnected map of your entire enterprise knowledge—your products, customers, processes, and their intricate relationships. It serves as the long-term memory and the contextual bedrock for AI agents. An agent without this map is functionally blind, navigating your business by statistical association alone.

Building on this foundation is our Agent-as-a-Service (AaaS) framework. This is the execution layer that translates probabilistic model outputs into deterministic business logic. Through the Model Context Protocol (MCP), we orchestrate multiple specialized agents, tools, and data sources to perform complex, multi-step tasks.

Consider a task like "Analyze the impact of our recent supply chain disruption in Southeast Asia on our top 10 enterprise accounts and draft mitigation plans." An ungrounded LLM might generate a plausible but generic report based on its public training data.

An Epsilla-powered agent system executes a verifiable workflow:

- Agent 1 (Data Query): Accesses the Semantic Graph to identify the affected supply chain nodes, the specific SKUs involved, and the top 10 accounts linked to those SKUs.

- Agent 2 (Impact Analysis): Pulls real-time inventory and sales data from internal APIs for those accounts and SKUs, calculating potential revenue impact and SLA violations.

- Agent 3 (Strategy): Cross-references this data with customer contracts and communication logs (also in the graph) to formulate tailored, fact-based mitigation options for each account.

- Agent 4 (Communication): Drafts the emails and internal briefings, citing the precise data points used in the analysis.

Every step is auditable. Every piece of data is grounded in the enterprise's reality via the Semantic Graph. The outcome is not a creative essay; it is a piece of verifiable business intelligence. This is the difference between innovation theater and an innovation engine.
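To make "every step is auditable" concrete, here is a minimal sketch of the four-step workflow above. It is not Epsilla's API: the `AuditedRun` class, the in-memory dictionaries standing in for the Semantic Graph and internal APIs, the account and SKU names, and the 40,000 threshold are all hypothetical, chosen only to show how each step can record what it read and what it produced.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical stand-in for the graph: which accounts buy which SKU.
SKU_TO_ACCOUNTS = {"SKU-100": ["Acme", "Globex"], "SKU-200": ["Initech"]}
# Hypothetical stand-in for internal sales APIs: revenue per (account, SKU).
REVENUE = {
    ("Acme", "SKU-100"): 50_000,
    ("Globex", "SKU-100"): 20_000,
    ("Initech", "SKU-200"): 75_000,
}


@dataclass
class AuditedRun:
    """Shared state plus an append-only log of every step's inputs and outputs."""
    state: dict = field(default_factory=dict)
    log: list = field(default_factory=list)

    def step(self, name: str, fn: Callable[[dict], dict]) -> None:
        inputs = dict(self.state)  # snapshot of what the step could see
        outputs = fn(self.state)
        self.state.update(outputs)
        self.log.append({"step": name, "inputs": inputs, "outputs": outputs})


def query_affected(state: dict) -> dict:
    # Step 1: find accounts linked to the disrupted SKUs.
    skus = state["disrupted_skus"]
    accounts = sorted({a for s in skus for a in SKU_TO_ACCOUNTS.get(s, [])})
    return {"accounts": accounts}


def analyze_impact(state: dict) -> dict:
    # Step 2: sum revenue at risk per affected account.
    impact = {}
    for (account, sku), revenue in REVENUE.items():
        if sku in state["disrupted_skus"] and account in state["accounts"]:
            impact[account] = impact.get(account, 0) + revenue
    return {"revenue_at_risk": impact}


def propose_mitigation(state: dict) -> dict:
    # Step 3: hypothetical rule, large exposure gets priority outreach.
    plans = {
        account: "priority outreach" if amount >= 40_000 else "standard notice"
        for account, amount in state["revenue_at_risk"].items()
    }
    return {"plans": plans}


def draft_briefing(state: dict) -> dict:
    # Step 4: every line cites the figure it is based on.
    lines = [
        f"{account}: {state['revenue_at_risk'][account]} at risk, {plan}"
        for account, plan in sorted(state["plans"].items())
    ]
    return {"briefing": lines}


run = AuditedRun(state={"disrupted_skus": ["SKU-100"]})
run.step("data_query", query_affected)
run.step("impact_analysis", analyze_impact)
run.step("strategy", propose_mitigation)
run.step("communication", draft_briefing)

for entry in run.log:
    print(entry["step"], "->", entry["outputs"])
```

The design choice that matters is the log, not the agents: because each entry stores the inputs a step saw alongside its outputs, a reviewer can trace any line in the final briefing back to the records that produced it.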

The future of enterprise AI belongs to those who stop chasing benchmark points and start building systems of record and execution. It’s time to demand verifiability. The next time you see a mind-blowing demo, take a breath, smile, and ask a simple question: "How would I audit the results of that process?" The answer will tell you everything you need to know.

FAQ: Grounding AI in the Enterprise

What is the "grounding problem" in enterprise AI?

The grounding problem is the challenge of ensuring AI models operate on the specific, factual, and current reality of your business, not just on the generic patterns in their training data. Without proper grounding, AI systems are prone to hallucinating and to making decisions that are disconnected from your operational truth.
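One simple way to picture a grounding check is to compare each claim in a model's draft against a system of record before anything is sent. The sketch below assumes a hypothetical `FACTS` table and a hypothetical claim format of entity, attribute, and value; real systems would need claim extraction and fuzzier matching, which are out of scope here.

```python
# Hypothetical system of record: (entity, attribute) -> recorded value.
FACTS = {
    ("Acme", "contract_sla_days"): 5,
    ("Acme", "open_orders"): 12,
}


def check_claims(claims, facts):
    """Split claims into those backed by a matching record and those that are not."""
    supported, unsupported = [], []
    for claim in claims:
        key = (claim["entity"], claim["attribute"])
        if key in facts and facts[key] == claim["value"]:
            supported.append(claim)
        else:
            # Either the record contradicts the claim or no record exists.
            unsupported.append(claim)
    return supported, unsupported


draft_claims = [
    {"entity": "Acme", "attribute": "contract_sla_days", "value": 5},
    {"entity": "Acme", "attribute": "open_orders", "value": 20},      # contradicts the record
    {"entity": "Acme", "attribute": "churn_risk", "value": "high"},   # no record at all
]

supported, unsupported = check_claims(draft_claims, FACTS)
print(len(supported), "supported;", len(unsupported), "need review")
```

Treating "no record" the same as "contradicted" is a deliberately conservative choice: a claim the system cannot verify is held for review rather than passed through.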

How does a Semantic Graph solve this better than a standard vector database?

A vector database stores embeddings of individual data chunks and retrieves them by similarity, but it holds no explicit links between those chunks. A Semantic Graph goes further by explicitly mapping the relationships between data points: connecting a customer to their purchase history, a product to its supply chain, and an employee to their project roles. Because those relationships can be traversed and inspected, they give agentic reasoning a far more robust foundation.
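The difference is easiest to see with a multi-hop question such as "which customers depend on a given supplier?". The sketch below uses a toy in-memory graph, not Epsilla's Semantic Graph; the `TinyGraph` class, the relation names, and the sample entities are hypothetical.

```python
from collections import defaultdict


class TinyGraph:
    """Toy labeled graph: subject -> list of (relation, object) edges."""

    def __init__(self):
        self.edges = defaultdict(list)

    def add(self, subject, relation, obj):
        self.edges[subject].append((relation, obj))

    def neighbors(self, subject, relation):
        # .get avoids creating empty entries for unknown subjects.
        return [o for r, o in self.edges.get(subject, []) if r == relation]


g = TinyGraph()
g.add("Acme", "purchased", "SKU-100")
g.add("SKU-100", "supplied_by", "Plant-7")
g.add("Globex", "purchased", "SKU-200")
g.add("SKU-200", "supplied_by", "Plant-9")


def customers_exposed_to(graph, supplier):
    """Two-hop traversal: customer -> purchased SKU -> supplier."""
    exposed = set()
    for customer in list(graph.edges):
        for sku in graph.neighbors(customer, "purchased"):
            if supplier in graph.neighbors(sku, "supplied_by"):
                exposed.add(customer)
    return sorted(exposed)


print(customers_exposed_to(g, "Plant-7"))  # ['Acme']
```

A similarity search over isolated chunks could surface text mentioning "Plant-7", but the answer here comes from following two explicit edges, and the path itself serves as the explanation.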

Why is an "Agent-as-a-Service" (AaaS) framework necessary for production AI?

Real business processes are complex and multi-step, requiring more than a single call to an LLM. An AaaS framework provides the essential orchestration to coordinate multiple specialized agents, tools, and data sources, ensuring that complex tasks are executed reliably, verifiably, and with a clear audit trail for every step.