Key Takeaways

- The current software stack, built for human developers (Git, JSON, Docker), is a critical bottleneck for autonomous AI agents due to its verbosity (high token cost) and latency.

- A new "agent-native" stack is emerging, with hyper-optimized replacements for version control, runtime environments, and concurrency management, prioritizing machine efficiency over human readability.

- These new, disparate tools create an orchestration challenge. Without a shared source of truth, agent swarms risk chaotic, uncoordinated, and strategically misaligned actions.

- The solution is a central memory and governance layer. Epsilla's Semantic Graph provides the long-term, context-rich "enterprise brain" that directs these hyper-efficient agents, ensuring their actions are coherent and goal-oriented.

The discourse around artificial intelligence is undergoing a palpable phase shift. For the past several years, the focus has been model-centric: larger parameter counts, novel architectures, and marginal benchmark victories. This was a necessary, foundational stage. But we are now entering the agentic era, where the primary challenge is not merely generating a correct response, but orchestrating sequences of actions to achieve complex, multi-step goals. This transition from passive model to active agent is not an incremental upgrade. It is a paradigm break that is rendering our entire software infrastructure obsolete.

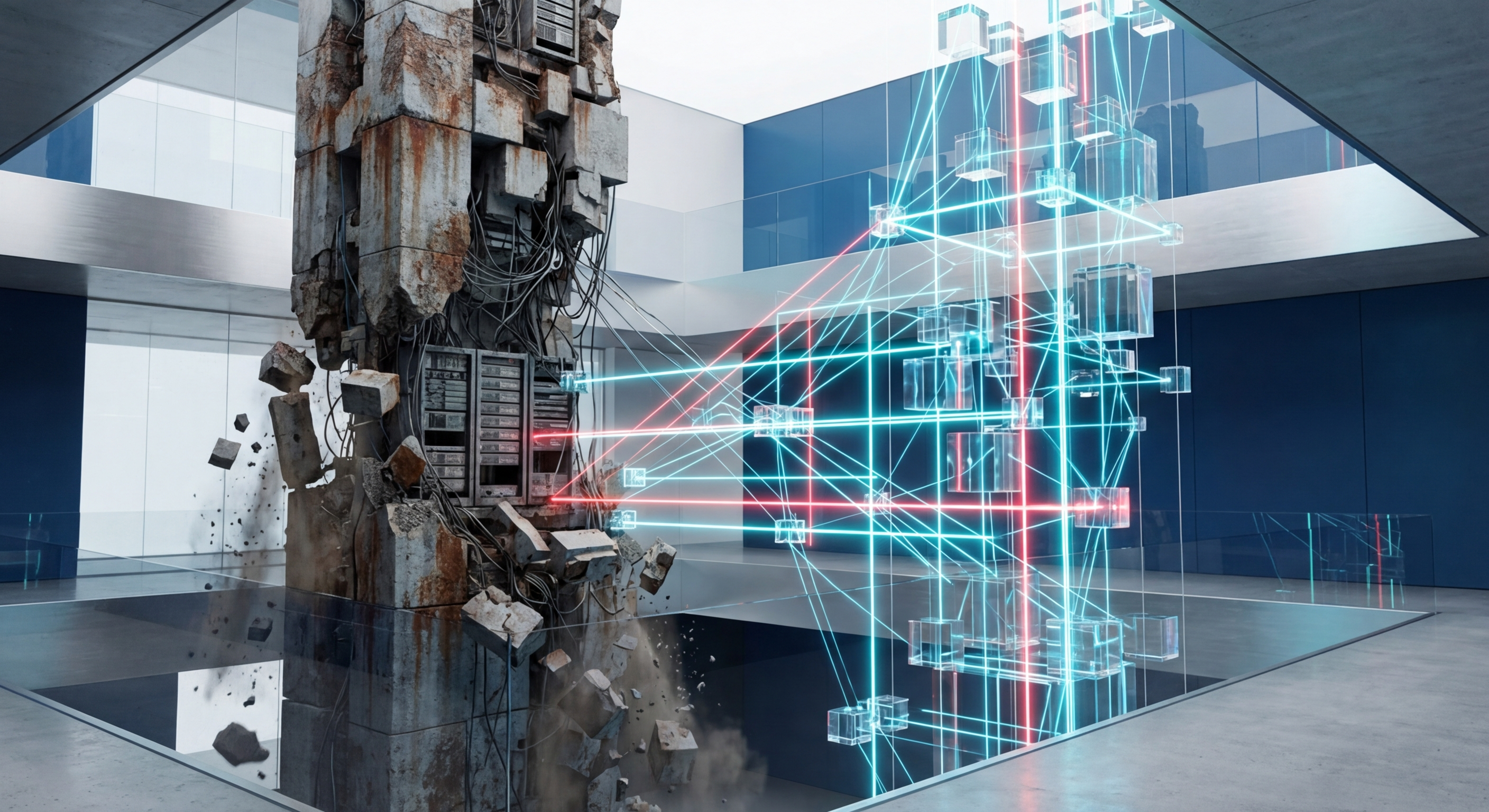

We are at the beginning of a Great Rewrite. The tools we built to facilitate human collaboration—tools we venerate for their elegance and power—are proving to be fundamentally unsuited for a workforce of autonomous agents. The evidence for this is no longer theoretical; it's appearing daily in the open-source community, where pragmatic engineers are systematically identifying and replacing the bottlenecks. What we're witnessing is the birth of an entirely new, almost alien, technology stack.

Consider the bedrock of modern software development: Git. It is a masterpiece of distributed systems design, for humans. But for a GPT-5 agent, its verbosity is a crippling tax. Every commit message, every diff formatted for human eyes, is a costly expenditure of context window tokens. This is precisely the problem that prompted the creation of Nit, a Git replacement rebuilt in Zig to slash token overhead by 71%. This isn't a minor optimization; it's a fundamental re-evaluation of how machines should communicate state changes. The design principle shifts from human-readability to token-efficiency.

This pattern repeats across the stack. The concurrency models we use are based on the assumption of a few, high-latency human actors. When you unleash a swarm of hundreds of AI agents on a single repository, traditional pull-request workflows collapse into a morass of merge conflicts. This inevitability led to projects like Wit, a system designed explicitly to stop merge conflicts when multiple agents edit the same repo. It's a direct response to the high-frequency, parallel execution model of agentic systems, a problem human-centric systems were never designed to solve.

The abstractions extend to the very languages and runtimes we use. Why are we seeing a resurgence of interest in Lisp, as demonstrated by projects such as a new Lisp dialect built for AI agents? Because Lisp's homoiconicity, the principle that code is data, is the ideal substrate for an agent that must dynamically generate, analyze, and modify its own logic. The syntactic overhead and rigid structures of mainstream languages are a cage for a truly autonomous system.
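To make "code is data" concrete, here is a minimal Python sketch. It is not the Lisp dialect mentioned above, and the names `evaluate` and `rewrite` are hypothetical. It assumes a program is stored as nested lists, so an agent can inspect and rewrite it with ordinary list operations before running it.

```python
import operator

# Hypothetical mini-evaluator: programs are plain nested lists, Lisp-style.
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}

def evaluate(expr):
    """Evaluate a nested-list expression such as ["+", 1, ["*", 2, 3]]."""
    if isinstance(expr, (int, float)):
        return expr
    op, *args = expr
    values = [evaluate(a) for a in args]
    result = values[0]
    for v in values[1:]:
        result = OPS[op](result, v)
    return result

def rewrite(expr, old_op, new_op):
    """Return a copy of the program with every old_op replaced by new_op."""
    if not isinstance(expr, list):
        return expr
    head, *rest = expr
    head = new_op if head == old_op else head
    return [head] + [rewrite(r, old_op, new_op) for r in rest]

program = ["+", 1, ["*", 2, 3]]
print(evaluate(program))                     # 7
print(evaluate(rewrite(program, "*", "+")))  # 6
```

The program and the data the agent manipulates share one representation, so no separate parser or code generator sits between "reading the logic" and "changing the logic".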

Even our deployment models are being dismantled. The container, once the pinnacle of portable and scalable infrastructure, is now seen as a bloated impediment. The cold starts and resource overhead of Docker are unacceptable for spawning thousands of ephemeral agents to tackle a problem. The market is responding, with solutions like Cloudflare's Dynamic Workers, which ditch containers to run AI agent code 100x faster on a lightweight, V8-isolate-based substrate. This is a move toward a far more granular, efficient, and instantaneous compute fabric.

As these agents become more powerful and autonomous, the question of control becomes paramount. Simply handing a Llama 4-powered agent an unrestricted API key is an existential risk to any enterprise. This has given rise to a critical new layer of infrastructure: the agent access control proxy. Projects like SentinelGate, an open-source Model Context Protocol (MCP) proxy, are pioneering this space. They act as a firewall, intercepting agent requests and ensuring they adhere to a strict set of permissions and operational boundaries. MCP itself only standardizes how agents reach tools and data; proxies that sit on that channel are becoming the nascent IAM for our new AI workforce.
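The interception pattern can be sketched in a few lines of Python. This is an illustration under stated assumptions, not SentinelGate's API or the MCP wire format: `Policy`, `proxy_call`, the tool names, and the path patterns are all hypothetical. The idea shown is deny-by-default: a call is forwarded only when an explicit rule allows that tool on that resource.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase

@dataclass
class Policy:
    """Hypothetical allow-list: tool name -> permitted resource patterns."""
    allowed: dict = field(default_factory=dict)

    def permits(self, tool: str, resource: str) -> bool:
        # Unknown tools have no patterns, so they are denied.
        patterns = self.allowed.get(tool, [])
        return any(fnmatchcase(resource, p) for p in patterns)

def proxy_call(policy, tool, resource, handler):
    """Forward the call only if the policy allows it; deny by default."""
    if not policy.permits(tool, resource):
        return {"ok": False, "error": f"denied: {tool} on {resource}"}
    return {"ok": True, "result": handler(resource)}

# Hypothetical rule: the agent may read files under repo/src/ and nothing else.
policy = Policy(allowed={"read_file": ["repo/src/*"]})
read = lambda path: f"contents of {path}"

print(proxy_call(policy, "read_file", "repo/src/main.py", read))
print(proxy_call(policy, "delete_file", "repo/src/main.py", read))
```

A real proxy would also authenticate the agent and log each decision; the sketch covers only the permission check.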

Each of these innovations (Nit, Wit, agent-native Lisp, isolate-based runtimes, SentinelGate) is a necessary and brilliant piece of the puzzle. They are the high-performance components of the new agentic machine. But a collection of optimized parts does not make a coherent system. A Formula 1 engine, a carbon fiber chassis, and an advanced gearbox are useless without a driver and a steering system to direct them.

This is the critical gap that most are overlooking. In our rush to build faster agentic components, we are neglecting the system that provides them with memory, context, and strategic direction. What prevents a swarm of these hyper-efficient agents from working at cross-purposes, re-solving the same problems, or optimizing for a local goal that is detrimental to the global enterprise strategy?

The answer is not simply a better database. A traditional vector database is a step in the right direction, but it's a blunt instrument. It can find semantically similar information—a form of associative memory—but it lacks a true understanding of the relationships, hierarchies, and causal links that define an enterprise. It can tell an agent what a past bug fix looks like, but not why it was implemented, which customer it impacted, and what downstream systems depend on it.

This is where we at Epsilla have focused our efforts. We understood early on that the core of a successful Agent-as-a-Service (AaaS) platform is not the agent itself, but the "brain" it connects to. We built our platform around a Semantic Graph, a living, persistent model of the enterprise's entire knowledge domain. It's more than a collection of vectors; it's a rich, interconnected graph of entities—customers, code modules, support tickets, product specifications, financial reports—and the explicit relationships between them.

When an agent operating within the Epsilla AaaS framework is tasked with a goal, its first action is to query this graph. It doesn't just get a list of documents; it gets a subgraph of contextual understanding. It sees the bug report, connected to the furious enterprise customer, linked to the specific microservice, which is owned by a particular engineering team, and has a history of similar performance issues.
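The difference between "a list of documents" and "a subgraph of context" can be illustrated with a small Python sketch. It is not Epsilla's API: the edge list, the entity names, and the `subgraph` function are hypothetical, and a plain in-memory list stands in for the graph store. It assumes relationships are stored as typed edges and collects everything within a fixed number of hops of the starting entity.

```python
from collections import deque

# Hypothetical typed edges: (source, relation, target).
EDGES = [
    ("bug-101", "reported_by", "customer-acme"),
    ("bug-101", "affects", "service-billing"),
    ("service-billing", "owned_by", "team-payments"),
    ("service-billing", "had_incident", "bug-087"),
    ("bug-087", "reported_by", "customer-globex"),
]

def subgraph(start, max_hops):
    """Breadth-first walk collecting edges within max_hops of start."""
    seen, found = {start}, []
    queue = deque([(start, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand past the hop limit
        for src, rel, dst in EDGES:
            if src == node:
                found.append((src, rel, dst))
                if dst not in seen:
                    seen.add(dst)
                    queue.append((dst, depth + 1))
    return found

for edge in subgraph("bug-101", max_hops=2):
    print(edge)
```

With a two-hop limit the agent receives the customer, the affected service, its owning team, and the earlier incident, while the unrelated customer three hops away is left out. A similarity search alone would return the bug report without any of those typed links.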

Armed with this deep context, the agent can now leverage the new, hyper-optimized stack with purpose. It can use a fast isolate-based runtime to execute its analysis, check out the relevant code using a token-efficient protocol like Nit, and submit a patch, with Wit managing any potential conflicts. Its access to production systems is governed by an MCP proxy like SentinelGate, with permissions dynamically granted by the Epsilla platform based on the context of the task. Crucially, as the agent works, it continuously updates the Semantic Graph with its findings, decisions, and outcomes. The enterprise brain doesn't just direct the work; it learns from it, growing smarter and more effective with every completed task.

The Great Rewrite is not optional. The economic and competitive pressures to deploy autonomous agents will force this architectural evolution. Enterprises that attempt to shoehorn agentic systems onto legacy, human-centric infrastructure will fail. They will be outmaneuvered by competitors whose agent swarms operate with an efficiency and coordination that is simply impossible with the old stack. The choice is not whether you will adopt this new stack, but how. You can assemble the disparate, high-performance components yourself and hope they cohere, or you can build upon a platform designed from the ground up to provide the memory, governance, and strategic orchestration that turns a swarm of agents into a unified, intelligent workforce.

FAQ: The AI Agent Software Stack

What is the "agent-native" software stack?

An agent-native stack is a new generation of software infrastructure designed for machine-to-machine interaction. It replaces human-centric tools like Git and Docker with hyper-optimized alternatives that prioritize token efficiency, low-latency execution, and high-concurrency operations, which are critical for swarms of autonomous AI agents.

Why is a simple vector database not enough for AI agents?

Vector search finds similar data but lacks relational context. A Semantic Graph, like Epsilla's, understands the relationships between entities (e.g., customers, code, tickets), providing agents the deep, structured knowledge needed for complex, multi-step tasks and ensuring their actions are strategically aligned with enterprise goals.

What is the Model Context Protocol (MCP)?

MCP is an open protocol that standardizes how AI agents connect to external tools and data sources. It is not itself an access management system, but because agent requests flow through it, an MCP proxy can act like an identity and access management (IAM) layer for AI, allowing fine-grained control over which operations an agent can perform on specific resources. This is a critical component for enterprise security and operational safety.