Key Takeaways

- DOM Bypassing is the Future of UI Rendering: Calculating text dimensions without relying on getBoundingClientRect or DOM reflows removes a massive performance bottleneck and makes 120fps virtualization of complex layouts feasible.

- Agentic Coding Solves Hard Problems, Not Just Boilerplate: The development of Pretext proves that frontier models (GPT-5, Claude 4) can navigate complex, platform-specific browser behaviors and mathematical algorithms when guided effectively.

- Enterprise Scaling Requires Structural Memory: While individual developers can manually guide an agent through a single complex component, scaling this across an enterprise requires Agent-as-a-Service architecture backed by a Semantic Graph to provide persistent, deterministic context.

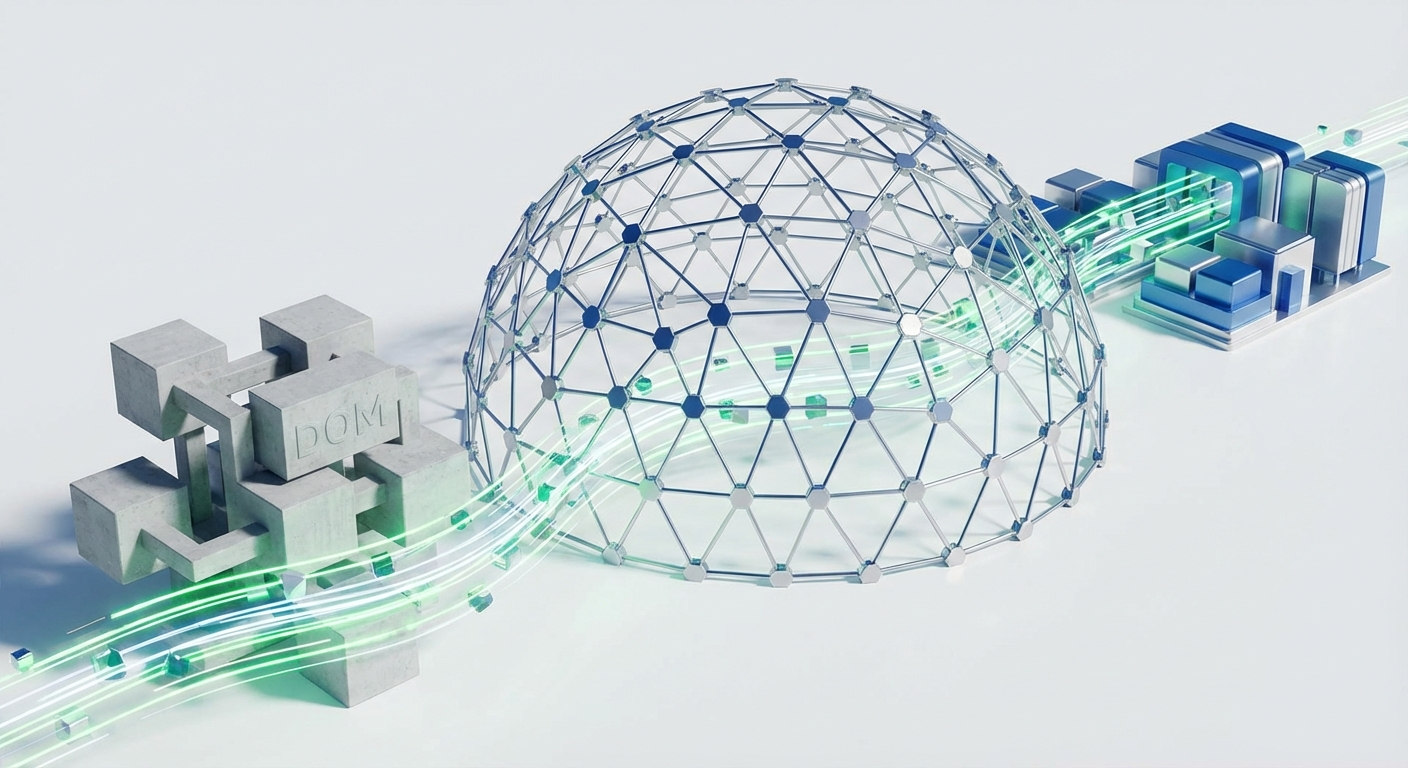

The UI engineering world has been quietly wrestling with a foundational performance ceiling for decades. Rendering complex layouts, especially virtualized lists or responsive grids, inevitably hits the DOM bottleneck. Calculating the exact dimensions of text (how it wraps, where it breaks, how much vertical space it needs) has traditionally required injecting the text into the Document Object Model (DOM), triggering a layout reflow, and reading the result with getBoundingClientRect. That work is computationally expensive and synchronous, and it kills performance at scale. Recently, Cheng Lou, a former React core team member and engineer at Midjourney, released Pretext, a project that confronts this exact problem. Within two days it had amassed over 11,000 stars on GitHub, igniting discussions across Hacker News and X. Pretext is not another state management library or component framework; it is a fundamental algorithmic rewrite of how we measure text in the browser.
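To make the cost of a synchronous reflow concrete, here is a toy model in TypeScript. It is not browser code, and it simplifies how real engines track layout invalidation: a write (inserting a text node, changing a style) marks layout dirty, and a geometry read such as getBoundingClientRect must run a reflow first if layout is dirty. The class and function names are illustrative.

```typescript
// Toy model of layout invalidation (not browser code). A DOM write marks
// layout dirty; a geometry read must reflow first if layout is dirty.
class LayoutModel {
  private dirty = false;
  reflows = 0;

  // Stands in for inserting a text node or changing a style.
  write(): void {
    this.dirty = true;
  }

  // Stands in for a geometry read such as getBoundingClientRect().
  read(): void {
    if (this.dirty) {
      this.reflows += 1; // synchronous reflow before the read can answer
      this.dirty = false;
    }
  }
}

// Insert each snippet and read its size immediately (interleaved).
function measureInterleaved(n: number): number {
  const layout = new LayoutModel();
  for (let i = 0; i < n; i++) {
    layout.write();
    layout.read();
  }
  return layout.reflows;
}

// Insert every snippet first, then read them all (batched).
function measureBatched(n: number): number {
  const layout = new LayoutModel();
  for (let i = 0; i < n; i++) layout.write();
  for (let i = 0; i < n; i++) layout.read();
  return layout.reflows;
}

console.log(measureInterleaved(1000)); // 1000
console.log(measureBatched(1000)); // 1
```

Batching the reads cuts the count to a single reflow, but that reflow still has to lay out everything that was inserted, and the text still has to live in the DOM to be measured at all. Removing that dependency on the DOM is the point of Pretext's approach.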

The mechanism behind Pretext is as elegant as it is necessary. It bypasses the DOM entirely and calculates text dimensions in two stages. First, it prepares the text, using Intl.Segmenter to find break points and the Canvas API to measure glyph widths. Second, it performs the layout mathematically in memory. This makes it feasible to recompute every item's height in a single linear pass, without caching the results, fast enough to make 120fps scrolling of massive, dynamic text lists possible. The performance implications for complex web applications, virtualized lists, and dynamic canvases are substantial. But beyond the technical brilliance of the layout engine itself, the story of how Pretext was built offers a critical glimpse into the immediate future of software engineering.
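The two-stage idea can be sketched in a few dozen lines. This is a minimal illustration under stated assumptions, not Pretext's code or API: `measureSegments` and `layoutLines` are hypothetical names, segmentation is a plain whitespace split (a browser version would use Intl.Segmenter to find break opportunities, including in scripts that do not use spaces), and widths are summed per word, ignoring kerning across word boundaries. The measuring function is injected so the same logic runs in a browser or a test.

```typescript
// Hypothetical two-stage layout sketch (not Pretext's API).
// Stage 1 measures each word once; stage 2 breaks lines with arithmetic only.

type MeasureFn = (segment: string) => number;

interface Prepared {
  widths: number[]; // measured width of each word, in px
  spaceWidth: number; // measured width of one space, in px
}

// Stage 1: segment and measure. Assumption: whitespace-separated words.
function measureSegments(text: string, measure: MeasureFn): Prepared {
  const words = text.split(/\s+/).filter((w) => w.length > 0);
  return { widths: words.map((w) => measure(w)), spaceWidth: measure(" ") };
}

// Stage 2: greedy line breaking over the stored widths. No DOM access.
function layoutLines(
  prepared: Prepared,
  maxWidth: number,
  lineHeight: number,
): { lineCount: number; height: number } {
  let lineCount = 0;
  let lineWidth = 0;
  for (const w of prepared.widths) {
    if (lineCount === 0) {
      // The first word opens the first line.
      lineCount = 1;
      lineWidth = w;
    } else if (lineWidth + prepared.spaceWidth + w <= maxWidth) {
      // The word fits after a space on the current line.
      lineWidth += prepared.spaceWidth + w;
    } else {
      // Wrap: the word starts a new line (an overlong word overflows it).
      lineCount += 1;
      lineWidth = w;
    }
  }
  return { lineCount, height: lineCount * lineHeight };
}

// Usage with a fake fixed-width font (10px per character).
const fixedWidth: MeasureFn = (s) => s.length * 10;
const prepared = measureSegments("the quick brown fox jumps", fixedWidth);
console.log(layoutLines(prepared, 120, 20)); // { lineCount: 3, height: 60 }
```

In a browser, the measurer could wrap the Canvas API: take a 2D context, set `ctx.font` to the target font, and pass `(s) => ctx.measureText(s).width`. After that one-time preparation, calling `layoutLines` again at a new container width is pure arithmetic with no DOM reads.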

Cheng Lou explicitly noted that Pretext was developed with extensive assistance from AI coding agents, specifically leveraging Claude Code and Codex. He didn't just use them for boilerplate generation; he used them as interactive reasoning engines. He tasked the agents with building interactive visualizers to map out the idiosyncratic, often undocumented behaviors of different browsers rendering various languages, including complex right-to-left scripts and platform-specific emojis. The agents helped measure, visualize, and iterate on the algorithm until it achieved parity with the browser's native (and slow) DOM rendering.

This is a watershed moment. It proves that we have crossed the threshold where AI agents are no longer just advanced autocomplete tools: they can navigate the complexity of browser text rendering, which Cheng Lou described as coming "from the depths of hell", and solve deeply technical, algorithmic problems. When guided by a skilled engineer, frontier models like GPT-5, Claude 4, and Llama 4 can dissect platform discrepancies, write mathematical layout engines, and build the scaffolding required to test them.

However, looking at this as an enterprise founder, through the lens of Agent-as-a-Service (AaaS), I see a stark reality. Cheng Lou's success relied on his own immense context window, built over years of work on React and ReasonML, which he manually fed into the agent. He acted as the architectural constraint, the memory, and the verifier. This "solo-developer-plus-agent" model is powerful, but it does not scale automatically to a 500-person engineering organization.

When you deploy coding agents across an enterprise, the primary failure mode is not a lack of intelligence; it is a lack of context and structural alignment. An agent tasked with optimizing a layout engine in a monolithic enterprise codebase will hallucinate if it does not understand the hidden dependencies, the legacy state management quirks, or the specific design system constraints of that organization. It will write brilliant, mathematically sound code that completely breaks the build.

This is exactly why we built Epsilla. If we want agents to solve problems as complex as DOM-bypassing text layout at enterprise scale, they cannot rely on a human developer to manually inject context into every prompt. They require a persistent, structural understanding of the environment they are operating in. Epsilla provides this through our Semantic Graph architecture.

In an Epsilla-powered AgentStudio deployment, the coding agent does not start from zero. The Semantic Graph maps the entire codebase, the API contracts, the architectural decisions, and the historical pull request discussions. When the agent is tasked with a complex refactor or algorithmic optimization, its reasoning is tethered to this deterministic graph. It knows exactly what dependencies its changes will touch. It understands the "why" behind the existing architecture, preventing it from regressing into hallucinated solutions that sound plausible but fail in execution.
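At its simplest, knowing "exactly what dependencies its changes will touch" is a reachability query: follow reverse edges from the changed node to everything that depends on it. The sketch below assumes a bare module-import graph with hypothetical module names. It illustrates the query only; it is not Epsilla's Semantic Graph implementation or API, which, per the description above, also maps API contracts, architectural decisions, and pull request history.

```typescript
// Hypothetical sketch: blast-radius query over a module dependency graph.
// An entry "A" -> ["B"] means module A imports module B.
type DependencyGraph = Map<string, string[]>;

// Invert the edges: for each module, list the modules that import it.
function buildDependents(graph: DependencyGraph): Map<string, string[]> {
  const dependents = new Map<string, string[]>();
  graph.forEach((imports, mod) => {
    for (const dep of imports) {
      const list = dependents.get(dep) ?? [];
      list.push(mod);
      dependents.set(dep, list);
    }
  });
  return dependents;
}

// Breadth-first search over reverse edges: every module that directly or
// transitively depends on `changed`, sorted for stable output.
function impactOf(graph: DependencyGraph, changed: string): string[] {
  const dependents = buildDependents(graph);
  const seen = new Set<string>([changed]);
  const queue: string[] = [changed];
  while (queue.length > 0) {
    const current = queue.shift() as string;
    for (const parent of dependents.get(current) ?? []) {
      if (!seen.has(parent)) {
        seen.add(parent);
        queue.push(parent);
      }
    }
  }
  seen.delete(changed);
  return Array.from(seen).sort();
}

// Hypothetical modules: a chat view renders a text list that uses a measurer.
const graph: DependencyGraph = new Map<string, string[]>([
  ["ChatView", ["TextList"]],
  ["TextList", ["measureText"]],
  ["Settings", ["theme"]],
  ["measureText", []],
]);
console.log(impactOf(graph, "measureText")); // [ 'ChatView', 'TextList' ]
```

The `seen` set keeps the walk finite even when the graph contains import cycles, and modules outside the changed node's reverse closure (here `Settings`) are correctly left out of the result.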

Cheng Lou's Pretext is a beautiful demonstration of what is possible when human architectural vision pairs with agentic execution. It solves a real, painful problem in UI engineering. But the broader lesson is about leverage. The next generation of category-defining software will be built by teams who figure out how to give their agents persistent, verifiable memory. The future of engineering is not just faster coding; it is orchestrated, context-aware problem solving, and the Semantic Graph is the infrastructure that makes it possible.

FAQ: Agentic Coding and UI Performance

What is the main performance bottleneck in traditional web UI layout?

The primary bottleneck is relying on the Document Object Model (DOM) to measure element dimensions. Injecting text and then reading its geometry (for example with getBoundingClientRect) forces the browser to perform a synchronous layout reflow, which drastically degrades performance, especially in virtualized lists or complex responsive grids.
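Once row heights can be computed without the DOM, the other half of a fast virtualized list is finding which rows to render for a given scroll position. A common approach, sketched here under the assumption that a height is already known for every row (for instance from a DOM-free measurer) and that pixel values are integers, is a prefix-sum array of row offsets plus binary search. The function names are illustrative and not taken from any library.

```typescript
// offsets[i] is the top of row i; the last entry is the total scroll height.
function prefixOffsets(heights: number[]): number[] {
  const offsets = [0];
  for (const h of heights) offsets.push(offsets[offsets.length - 1] + h);
  return offsets;
}

// Binary search for the row containing pixel y: the largest i with
// offsets[i] <= y, clamped to the valid row range.
function rowAt(offsets: number[], y: number): number {
  let lo = 0;
  let hi = offsets.length - 2; // index of the last row
  while (lo < hi) {
    const mid = Math.ceil((lo + hi) / 2);
    if (offsets[mid] <= y) lo = mid;
    else hi = mid - 1;
  }
  return lo;
}

// First and last row intersecting [scrollTop, scrollTop + viewportHeight).
// Returns [0, -1] for an empty list; assumes viewportHeight > 0.
function visibleRange(offsets: number[], scrollTop: number, viewportHeight: number): [number, number] {
  if (offsets.length < 2) return [0, -1];
  return [rowAt(offsets, scrollTop), rowAt(offsets, scrollTop + viewportHeight - 1)];
}

const offsets = prefixOffsets([20, 40, 60, 20]); // [0, 20, 60, 120, 140]
console.log(visibleRange(offsets, 30, 50)); // [ 1, 2 ]
```

Each scroll event costs O(log n) with no DOM reads; the offsets array is rebuilt in one O(n) pass only when heights change, for example after the container width changes.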

How does Pretext bypass the DOM for text measurement?

Pretext calculates text dimensions in memory without touching the DOM. It uses a two-stage process: first, it uses the Canvas API and Intl.Segmenter to gather glyph measurements and word-break opportunities; then it calculates the exact layout mathematically, which makes it far faster than native DOM measurement.

Why do enterprise coding agents need a Semantic Graph?

While agents can solve complex localized problems, they hallucinate when operating in large codebases without deep context. A Semantic Graph provides persistent, deterministic memory—mapping dependencies, architectural rules, and API contracts—ensuring the agent's output aligns with the enterprise's specific structural constraints.