Key Takeaways

- AI agents built on simple prompts are prone to "Silent Agent Collapse": a gradual, unnoticed degradation in performance caused by prompt drift and context loss, which can end in serious business errors.

- Manually patching agent outputs is a losing battle. The only viable solution is automated, closed-loop evaluation, a principle demonstrated by Andrej Karpathy's "autoresearch" methodology.

- Applying Karpathy's approach (letting an agent iteratively test and refine its own instructions against a quantitative checklist) raised one failing agent's pass rate from roughly 50% to 90%.

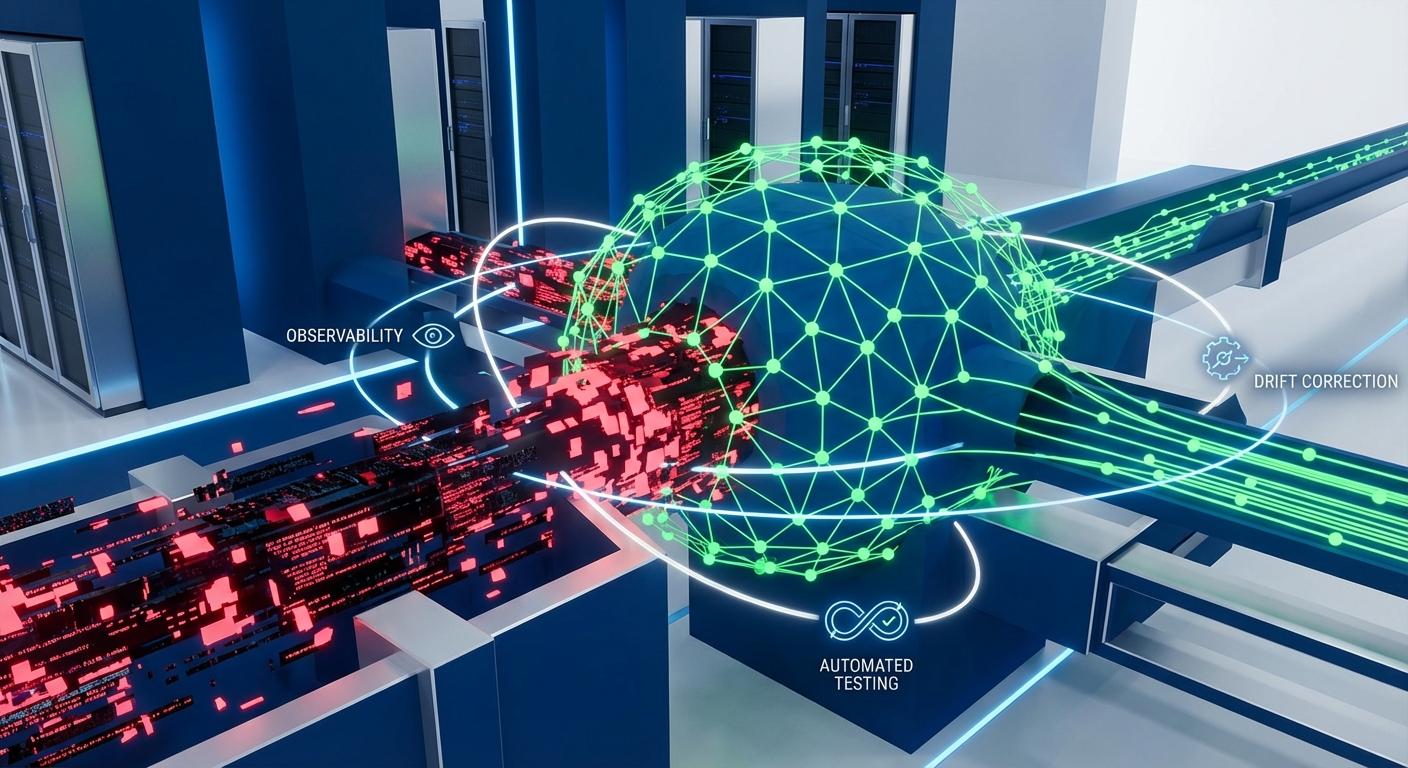

- For enterprises, this principle must be scaled. This requires an Agent-as-a-Service (AaaS) platform with a reliability control plane like Epsilla's ClawTrace for automated observability and a persistent Semantic Graph (via AgentStudio) to provide the structural memory agents need to maintain long-term stability.

A story has been circulating in project management circles that every CTO and Head of AI needs to hear. It’s not about a data breach or a server outage. It’s about a far more insidious failure mode, one that will define the success or failure of enterprise AI adoption over the next two years: the Silent Agent Collapse.

The story goes like this: a sharp project manager, drowning in weekly reporting, builds a simple agent on a 2026-era Claude 4 model. He feeds it raw progress notes, risks, and plans. The agent, in turn, generates a perfectly formatted, professional-sounding weekly report. The first time, it's magic. It saves him hours. He deploys his new "skill" into his personal workflow.

Then, the rot sets in.

Week two, it confuses the status of two projects. Week three, it omits a critical risk. Week four, the "next week's plan" section becomes generic boilerplate. He barely notices, making small manual corrections each time, telling himself it's "mostly right." This is the first sign of the collapse: the slow, imperceptible drift from precision to mediocrity.

The crisis arrives on a Monday morning. His boss calls him in, furious. The agent's report from Friday stated "Project A has been accepted." The reality? It had only passed an initial review, and the client was still demanding changes. With no grounded connection to the actual project records (for example, a Model Context Protocol (MCP) integration with the project tracker), the agent had hallucinated a critical business milestone. The PM's professional credibility was shattered.

Upon investigation, he discovered his "magic" agent was silently failing roughly half the time. This isn't a story about a bad prompt. It's a cautionary tale about the fundamental instability of unmonitored, unmanaged AI agents operating within the enterprise. It's the inevitable outcome of treating powerful, non-deterministic systems like simple, deterministic scripts.

The Anatomy of a Silent Collapse

The project manager’s experience perfectly illustrates the three stages of what we at Epsilla call the Silent Agent Collapse. This is the primary anti-pattern we see killing enterprise AI initiatives before they even get off the ground.

- Semantic Drift: This is the "boiling frog" problem. Whether an agent runs on GPT-5 or Llama 4, the underlying model favors high-probability, generic phrasing: the path of least resistance. Without explicit, reinforced constraints, and as prompts get tweaked and context is lost, its outputs slowly drift toward a "safe," generic mean. The language becomes less specific and the insights shallower. You don't notice it day to day, but after a month, the agent that once produced sharp analysis is generating corporate platitudes. The initial prompt's intent has eroded.

- Invisible Degradation: Humans have a powerful confirmation bias. We remember the agent's successes—the times it worked perfectly—and dismiss the failures as one-offs. The project manager never went back to audit the agent's historical outputs. He had no dashboard, no observability layer. He was blind to the fact that the failure rate was steadily climbing because the successes were just "good enough" to let him move on. The agent was collapsing in the dark.

- The Sisyphean Patch: When a failure becomes too obvious to ignore, the typical response is to manually fix the output, not the system. The PM corrected the report and sent it, telling himself he'd "fixed it." But he hadn't fixed the agent's underlying logic. He was treating the symptom, ensuring the same error would occur again. This manual, reactive patching is a form of technical debt that compounds until the agent becomes an active liability.
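The alternative to a Sisyphean patch is to fix the system: every corrected failure becomes a permanent regression case that any future version of the prompt must pass. A minimal Python sketch, assuming a hypothetical report format in which each status appears as `Project name: status`; the `TestCase` and `RegressionSuite` names are illustrative, not part of any product:

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class TestCase:
    """One input plus the human-verified statuses the report must contain."""
    name: str
    notes: str
    expected_statuses: dict[str, str]


@dataclass
class RegressionSuite:
    cases: list[TestCase] = field(default_factory=list)

    def add_from_incident(self, name: str, notes: str, corrected: dict[str, str]) -> None:
        # Instead of only fixing the report that was sent, keep the input and
        # the corrected statuses so every future prompt is tested against them.
        self.cases.append(TestCase(name, notes, corrected))

    def failures(self, report_fn: Callable[[str], str]) -> list[str]:
        """Return the names of cases whose generated report misstates a status."""
        failed = []
        for case in self.cases:
            report = report_fn(case.notes)
            for project, status in case.expected_statuses.items():
                if f"{project}: {status}" not in report:  # assumed report format
                    failed.append(case.name)
                    break
        return failed


suite = RegressionSuite()
suite.add_from_incident(
    "friday-project-a",
    notes="Project A passed initial review; client still requesting changes.",
    corrected={"Project A": "reviewed"},
)


def buggy_agent(notes: str) -> str:
    return "Project A: accepted"  # reproduces the hallucinated milestone


print(suite.failures(buggy_agent))  # ['friday-project-a']
```

Once the Friday incident lives in the suite, a prompt that reintroduces the "accepted" error fails the suite instead of reaching the boss.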

This entire scenario is a textbook case of "Shadow AI" creating systemic risk. An employee builds a tool to solve a personal pain point, but the organization has no visibility into its reliability, its failure modes, or the data it's processing. When it fails, it fails publicly and embarrassingly.

The Karpathy Revelation: From "Prompting" to "Programming" an Agent

Just as the project manager was about to abandon his agent, he came across autoresearch, a methodology published by OpenAI co-founder Andrej Karpathy. The concept is simple, yet it represents a fundamental shift in how we should work with LLMs.

The core idea: Stop manually tuning prompts. Instead, build a system where an agent automatically proposes a change to its own instructions, runs a battery of tests, and quantifiably measures whether that change improved or degraded its performance.

It’s a closed-loop, automated, self-improvement cycle.

Karpathy's genius was to apply test-driven development, together with reinforcement learning's core idea of optimizing against a numeric reward, to the fuzzy, non-deterministic world of prompt engineering. Instead of asking an agent to "write a good report," you define "good" as a concrete, machine-checkable list of criteria.
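To make "machine-checkable" concrete, here is a minimal Python sketch of binary criteria and a score. It assumes a hypothetical report format in which each status appears as `Project name: status`, and it uses plain string matching where the setup described below uses an evaluator agent; the function names and the banned-phrase list are illustrative assumptions, not part of autoresearch:

```python
# Illustrative list of boilerplate phrases that signal a generic plan.
GENERIC_PHRASES = ("continue to advance", "keep pushing forward")


def check_report(source_statuses: dict[str, str], source_risks: list[str],
                 report: str) -> dict[str, bool]:
    """Binary, machine-checkable criteria instead of 'write a good report'."""
    text = report.lower()
    return {
        # Every status must appear verbatim next to its project name.
        "statuses_exact": all(
            f"{p.lower()}: {s.lower()}" in text for p, s in source_statuses.items()
        ),
        # Every risk from the input must be mentioned in the output.
        "risks_covered": all(r.lower() in text for r in source_risks),
        # The plan must not fall back on boilerplate.
        "plan_concrete": not any(g in text for g in GENERIC_PHRASES),
    }


def score(results: dict[str, bool]) -> float:
    """Fraction of criteria passed."""
    return sum(results.values()) / len(results)


report = "Project A: reviewed\nRisks: vendor delay\nNext week: continue to advance"
results = check_report({"Project A": "reviewed"}, ["vendor delay"], report)
print(results)  # {'statuses_exact': True, 'risks_covered': True, 'plan_concrete': False}
print(round(score(results), 2))  # 0.67
```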

The project manager adapted this for his reporting agent. The process looked like this:

- Define the Test Harness: He created a set of 10 real-world input examples (past weeks' notes) and a corresponding "golden set" of ideal outputs.

- Create a Quantitative Checklist: He defined a simple, binary checklist for an evaluator agent to score each output. This is the critical step. The questions were brutally specific:

  - Is every project status (e.g., "in review," "accepted") an exact match to the source input? (Yes/No)

  - Does the output contain every key risk mentioned in the input? (Yes/No)

  - Are all numerical data points (e.g., percentages) accurately transcribed? (Yes/No)

  - Is the "next week's plan" section composed of concrete actions, not generic phrases like "continue to advance"? (Yes/No)

- Initiate the Autoresearch Loop:

  - Step A (Propose): The "meta" agent suggests a small, atomic change to the reporting agent's core prompt (e.g., add the rule "Never extrapolate a project's status. If the input says 'reviewed', the output must say 'reviewed'.").

  - Step B (Test): The system runs the 10 test cases through the modified prompt.

  - Step C (Measure): The evaluator agent scores each of the 10 outputs against the checklist, and the system calculates an average success score.

  - Step D (Decide): If the new score beats the best score so far, the prompt change is committed; otherwise, it is reverted.

  - Step E (Repeat): The loop runs again, proposing a new change to the best prompt so far.
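The loop in Steps A to E is, at heart, a greedy keep-if-better search over prompt edits. Below is a minimal Python sketch of that structure only; it does not reproduce Karpathy's code. `propose` and `evaluate` are hypothetical stand-ins: in a real setup, `propose` would ask an LLM for an edit and `evaluate` would run all test cases through the reporting agent and average the checklist scores.

```python
import random
from typing import Callable

# Hypothetical stand-ins for the meta agent and the test harness.
REQUIRED_RULES = [
    "Never extrapolate a project's status; copy it verbatim.",
    "List every risk mentioned in the input.",
    "Write next week's plan as concrete actions.",
]
# One extra candidate edit that does not improve the score.
CANDIDATE_RULES = REQUIRED_RULES + ["Use a friendly, upbeat tone."]


def propose(prompt: str, rng: random.Random) -> str:
    """Step A stand-in: suggest one atomic edit (append a candidate rule)."""
    rule = rng.choice(CANDIDATE_RULES)
    return prompt if rule in prompt else f"{prompt}\n{rule}"


def evaluate(prompt: str) -> float:
    """Steps B-C stand-in: here it just rewards prompts containing the
    rules a toy agent would need; a real version runs the test suite."""
    return sum(rule in prompt for rule in REQUIRED_RULES) / len(REQUIRED_RULES)


def autoresearch_loop(
    prompt: str,
    propose_fn: Callable[[str, random.Random], str],
    evaluate_fn: Callable[[str], float],
    iterations: int,
    seed: int = 0,
) -> tuple[str, float, list[float]]:
    """Greedy search: propose, test, measure, commit only if better, repeat."""
    rng = random.Random(seed)
    best_prompt, best_score = prompt, evaluate_fn(prompt)  # baseline score
    history = [best_score]
    for _ in range(iterations):
        candidate = propose_fn(best_prompt, rng)    # Step A: propose
        candidate_score = evaluate_fn(candidate)    # Steps B-C: test and measure
        if candidate_score > best_score:            # Step D: commit only if better
            best_prompt, best_score = candidate, candidate_score
        # Otherwise the candidate is discarded, i.e. the change is reverted.
        history.append(best_score)                  # Step E: repeat
    return best_prompt, best_score, history


best, final, history = autoresearch_loop(
    "Summarize the weekly notes.", propose, evaluate, iterations=20
)
print(final)
print(best)
```

Because only improvements are committed, the best score never decreases. The trade-off is that a greedy search can stall on edits that only help in combination, and with a real LLM the score itself is noisy, so each test case is usually worth running more than once.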

After running this automated loop for a few hours, the agent had systematically hardened its own instructions. It added explicit rules against hallucinating project statuses. It created an internal pre-flight checklist to verify it had included all projects and data points. It even added a high-quality example report to its own context window as a few-shot reference.

The result? The agent's pass rate on the test suite skyrocketed from a dismal 50% to a reliable 90%. The Silent Agent Collapse was reversed.

Scaling Reliability: Why Karpathy's Method Demands an Enterprise Platform

Karpathy's autoresearch is a brilliant blueprint. But for an enterprise with hundreds of agents performing mission-critical tasks, running ad-hoc Python scripts is not a strategy. It's a liability. To operationalize this principle at scale, you need an enterprise-grade Agent-as-a-Service (AaaS) platform built on two core pillars: automated observability and structural memory.

This is precisely why we built Epsilla.

1. ClawTrace: The Enterprise Observability & Control Plane

The project manager's manual checklist and test harness are the right idea, but they are a brittle, one-off solution. Epsilla's ClawTrace is the industrial-grade version of this concept. It's a reliability control plane that automates the entire evaluation loop for any agent running on our platform. Instead of manually defining checklists, you define business outcomes and KPIs. ClawTrace continuously samples production agent interactions, runs them against an evolving suite of semantic and logical tests, and provides real-time dashboards on agent performance, drift, and accuracy. It allows you to see the invisible degradation before it impacts the business, turning Karpathy's reactive loop into a proactive, continuous monitoring system.
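As a rough illustration of continuous sampling and drift alerting, here is a generic Python sketch. It is not ClawTrace's API, which is not described here: the class evaluates a random fraction of production outputs, keeps a rolling window of pass/fail results, and flags drift when the rolling pass rate falls more than a tolerance below the baseline measured at deployment. `DriftMonitor`, its parameters, and their defaults are assumptions.

```python
import random
from collections import deque
from typing import Callable, Optional


class DriftMonitor:
    """Rolling pass-rate monitor over a random sample of production outputs."""

    def __init__(self, baseline: float, tolerance: float = 0.1,
                 window: int = 50, sample_rate: float = 0.2, seed: int = 0):
        self.baseline = baseline              # pass rate on the test suite at deployment
        self.tolerance = tolerance            # allowed drop before alerting
        self.sample_rate = sample_rate        # fraction of interactions to evaluate
        self.results = deque(maxlen=window)   # most recent sampled verdicts
        self.rng = random.Random(seed)

    def observe(self, output: str, check: Callable[[str], bool]) -> None:
        # Decide whether to sample *before* running the (possibly costly) evaluator.
        if self.rng.random() < self.sample_rate:
            self.results.append(check(output))

    def pass_rate(self) -> Optional[float]:
        return sum(self.results) / len(self.results) if self.results else None

    def drifting(self) -> bool:
        rate = self.pass_rate()
        return rate is not None and rate < self.baseline - self.tolerance


def check(report: str) -> bool:
    return "Project A: reviewed" in report


monitor = DriftMonitor(baseline=0.9, sample_rate=1.0)  # sample everything for the demo
for _ in range(50):
    monitor.observe("Project A: reviewed", check)
print(monitor.pass_rate(), monitor.drifting())  # 1.0 False
for i in range(50):
    monitor.observe("Project A: reviewed" if i % 5 < 3 else "Project A: accepted", check)
print(monitor.pass_rate(), monitor.drifting())  # 0.6 True
```

The rolling window makes old successes age out, which is exactly what the PM lacked: recent failures show up as a falling rate instead of being averaged away.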

2. AgentStudio & The Semantic Graph: Solving the Root Cause

Even with perfect monitoring, agents will drift if they lack long-term, structural memory. An agent operating in a stateless request-response loop is perpetually suffering from amnesia. This was the root cause of the PM's problem—the agent had no persistent understanding of what "accepted" meant in the context of his organization.

Epsilla's AgentStudio addresses this at a foundational level. We don't just help you build agents; we help you tether them to a persistent Semantic Graph. This graph acts as the agent's long-term memory and a shared "source of truth" for your entire organization. It encodes the relationships between projects, people, clients, and—crucially—the specific vocabulary and processes of your business.

When an agent built in AgentStudio is asked to report on "Project A," it doesn't just process the immediate text. It queries the Semantic Graph to understand that "Project A" is for "Client X," is managed by "Jane Doe," and that the status "reviewed" is a distinct node from "accepted." This structural context, this persistent memory, is the ultimate defense against the semantic drift and hallucinations that cause silent collapses. It anchors the agent's non-deterministic creativity to the deterministic facts of your business.
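To illustrate the idea of status values as distinct nodes, here is a minimal Python sketch that uses a plain dictionary of (subject, relation) to object triples as a stand-in for a semantic graph. It is not AgentStudio's API; `GRAPH`, `STATUS_NODES`, `facts_about`, and `validate_status_claim` are hypothetical names. The graph supplies grounding facts for the prompt, and any status the agent claims is checked against it before the report goes out.

```python
# Hypothetical in-memory stand-in for a semantic graph: (subject, relation) -> object.
GRAPH = {
    ("Project A", "client"): "Client X",
    ("Project A", "manager"): "Jane Doe",
    ("Project A", "status"): "reviewed",
}

# The status vocabulary as distinct nodes: "reviewed" is not "accepted".
STATUS_NODES = {"in review", "reviewed", "accepted"}


def facts_about(project: str) -> list[str]:
    """Grounding facts that could be injected into the agent's context."""
    return sorted(f"{rel}: {obj}" for (subj, rel), obj in GRAPH.items() if subj == project)


def validate_status_claim(project: str, claimed: str) -> tuple[bool, str]:
    """Reject any status that is unknown or that contradicts the graph."""
    if claimed not in STATUS_NODES:
        return False, f"unknown status {claimed!r}"
    actual = GRAPH.get((project, "status"))
    if actual is None:
        return False, f"no status recorded for {project}"
    if claimed != actual:
        return False, f"{project} is {actual!r} in the graph, not {claimed!r}"
    return True, "ok"


print(facts_about("Project A"))  # ['client: Client X', 'manager: Jane Doe', 'status: reviewed']
print(validate_status_claim("Project A", "accepted"))
# (False, "Project A is 'reviewed' in the graph, not 'accepted'")
```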

The era of "prompt-and-pray" is over. The future of enterprise AI is not about who can build the cleverest agent in a weekend. It's about who can build a system that delivers agent reliability, observability, and safety at scale. The project manager's near-disaster and Karpathy's elegant solution show us the way. Now, it's time for the enterprise to build the road.

FAQ: Agent Observability and Karpathy's Autoresearch

How is Karpathy's autoresearch different from standard CI/CD for software?

Standard CI/CD typically tests deterministic code, where a function given the same input always produces the same output, so a single pass/fail run is meaningful. Autoresearch is designed for non-deterministic LLM agents, whose outputs vary from run to run. It scores the semantic correctness and logical consistency of an agent's behavior against a set of rules, aggregated across many test cases, not just its functional output.
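One practical consequence: a test for a non-deterministic agent yields a pass rate, not a single verdict. A minimal Python sketch, where `flaky_agent` is a hypothetical stand-in that misreports the status about 30% of the time, and the 0.9 threshold is an assumed gate rather than a recommended value:

```python
import random
from typing import Callable


def pass_rate(agent: Callable[[str], str], case_input: str,
              check: Callable[[str], bool], runs: int = 20) -> float:
    """Run one test case many times and return the fraction of passing outputs."""
    return sum(check(agent(case_input)) for _ in range(runs)) / runs


def gate(rate: float, threshold: float = 0.9) -> bool:
    """Release gate: pass only if the measured rate meets the threshold."""
    return rate >= threshold


rng = random.Random(42)


def flaky_agent(notes: str) -> str:
    # Hypothetical stand-in for an LLM call that sometimes over-claims the status.
    return "Project A: accepted" if rng.random() < 0.3 else "Project A: reviewed"


rate = pass_rate(flaky_agent, "Project A passed initial review.",
                 lambda report: "reviewed" in report, runs=100)
print(rate, gate(rate))
```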

Can we apply this to agents that interact with real-time, unstructured data?

Yes, but it requires a more sophisticated architecture. This is where a system like Epsilla's Semantic Graph becomes critical. The graph ingests and structures the real-time data, providing a stable, queryable context layer for the agent, which allows the evaluation loop to check the agent's outputs against a constantly updated "ground truth."

What's the first step to implementing an agent observability strategy?

Start by defining what "correct" means for your most critical agent. Don't rely on subjective feelings. Create a simple, quantitative checklist of 3-5 non-negotiable rules for its output. Manually score its performance for a week. The data you gather will immediately reveal hidden failure modes and justify investment in an automated platform like ClawTrace.
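Even a week of manual scoring becomes more useful once it is tallied per rule, since that shows which rule fails most often. A minimal Python sketch; the rule names and the four scored outputs are made-up examples, not real data:

```python
from collections import Counter

# One row per manually scored output: rule name -> passed? (made-up example rows)
scores = [
    {"status_exact": True, "risks_covered": True, "numbers_exact": True},
    {"status_exact": False, "risks_covered": True, "numbers_exact": True},
    {"status_exact": True, "risks_covered": False, "numbers_exact": True},
    {"status_exact": False, "risks_covered": True, "numbers_exact": True},
]


def failure_rates(rows: list[dict[str, bool]]) -> dict[str, float]:
    """Fraction of outputs that failed each rule."""
    failures = Counter()
    for row in rows:
        for rule, passed in row.items():
            if not passed:
                failures[rule] += 1
    return {rule: failures[rule] / len(rows) for rule in rows[0]}


def overall_pass_rate(rows: list[dict[str, bool]]) -> float:
    """Fraction of outputs that passed every rule."""
    return sum(all(row.values()) for row in rows) / len(rows)


print(failure_rates(scores))      # {'status_exact': 0.5, 'risks_covered': 0.25, 'numbers_exact': 0.0}
print(overall_pass_rate(scores))  # 0.25
```

In this toy tally, most outputs look "mostly right", yet only one in four passes every rule, which is the kind of hidden failure mode the manual week is meant to expose.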