Key Takeaways

- The rise of Agent-as-a-Service (AaaS) platforms, powered by models like GPT-5, has made automated data enrichment tasks like finding social media accounts by email trivially easy to execute, but dangerously difficult to govern.

- Ungoverned agents performing Open-Source Intelligence (OSINT) create massive compliance liabilities, risking GDPR/CCPA violations, data contamination, and severe reputational damage.

- Traditional Role-Based Access Control (RBAC) is fundamentally inadequate for agentic workflows; it controls who can initiate a task, not what the agent can see, process, or store during execution.

- Epsilla's Semantic Graph acts as the mandatory governance brain for AaaS. It enforces entity-centric access policies, provides an immutable audit trail, and ensures that the powerful capability to find social media accounts by email is a strategic asset, not a catastrophic liability.

As founders and builders, we are living through a paradigm shift. The architectural patterns of the last decade are being systematically dismantled and reassembled around a new primitive: the autonomous agent. Powered by 2026-era models like GPT-5 and Claude 4, the Agent-as-a-Service (AaaS) market is no longer a novelty; it is the new application layer. These agents can be tasked with complex, multi-step objectives, from market research to code generation. One of the most common and seemingly straightforward use cases is data enrichment—specifically, the directive to find social media accounts by email.

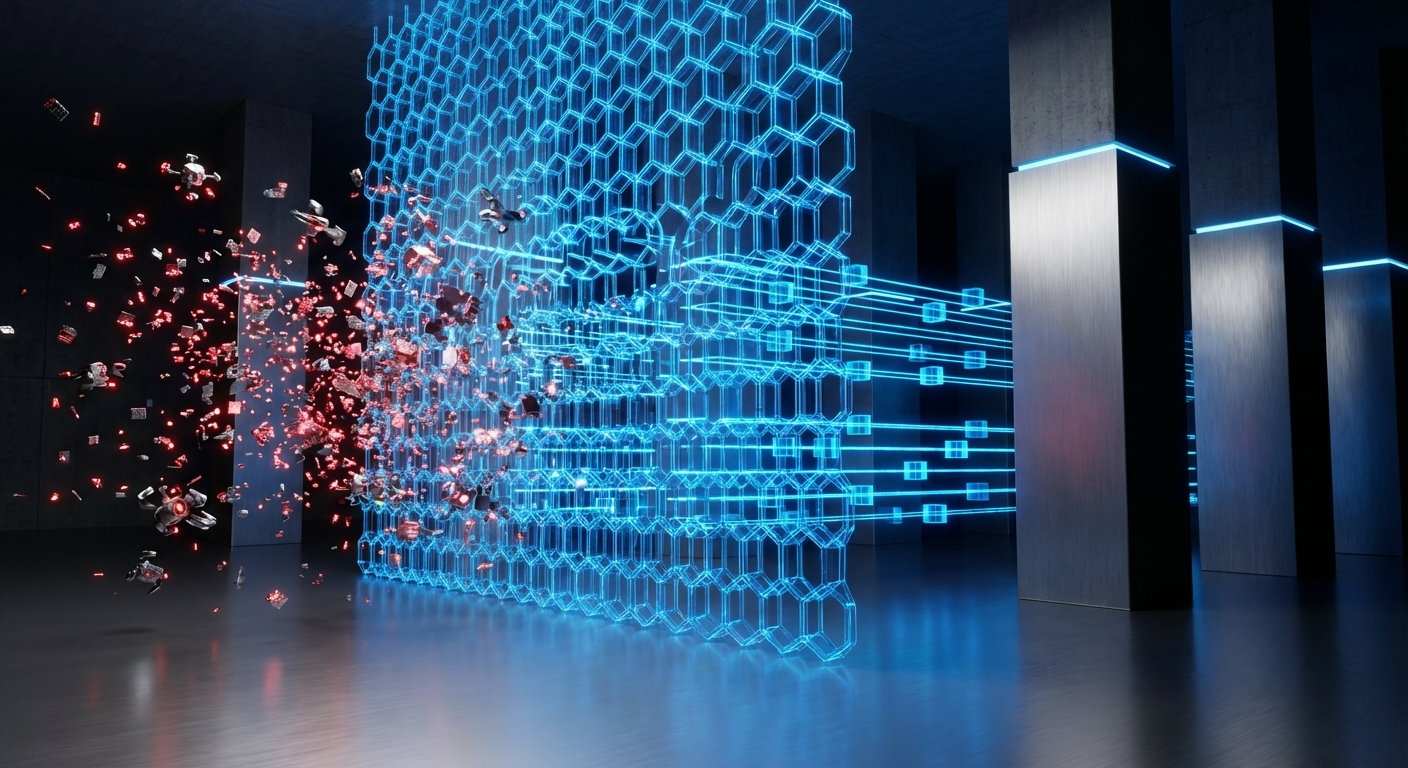

This capability is a potent tool for sales, marketing, and security teams. It promises to transform a simple email address into a rich, multi-faceted profile, providing context that can drive personalization or identify potential threats. The efficiency is seductive. But as we rush to deploy these powerful new tools, we are collectively ignoring the profound architectural flaw in most AaaS implementations: the complete absence of a governance brain.

Without a robust, stateful governance layer, tasking an agent to find social media accounts by email is like handing a new employee your master keys and telling them to "be useful." At scale, a serious failure is not a remote possibility; it is the expected outcome.

The Unseen Liability of the Ungoverned Agent

Let's dissect what actually happens when a naive, ungoverned agent receives this directive. It doesn't just check a few known APIs. It begins a relentless, automated Open-Source Intelligence (OSINT) campaign. It will scrape search engine results, crawl social media platforms, query data brokers, and explore forum archives. It will follow every digital breadcrumb, assembling a dossier of information that far exceeds the original, sanctioned intent.

This creates a minefield of risk. First, and most obviously, are the privacy and compliance violations. If the email belongs to a person in the EU (GDPR protects individuals located in the Union, regardless of citizenship), the agent's indiscriminate scraping of personal photos, political affiliations from Twitter/X, or posts on a public forum is a direct path to a GDPR nightmare. The agent doesn't understand context or consent; it only understands its objective. It cannot distinguish between a professional LinkedIn profile and a private-but-publicly-discoverable Facebook page.

Second is the risk of data contamination. The internet is rife with outdated and inaccurate information. The agent, optimized for collection rather than verification, will pull in old job titles, defunct profiles, and incorrect associations. This polluted data is then injected into your CRM or data lake, poisoning your ground truth and leading to flawed analytics and misguided outreach.

Finally, there is the operational and reputational risk. Aggressive, high-volume scraping from a single IP block is a fast track to getting blacklisted by major platforms. More insidiously, the data the agent collects becomes a high-value target. A breach of the system housing this aggregated personal data is far more damaging than a breach of the original, simple email list.

The core of the problem is that traditional security models like Role-Based Access Control (RBAC) are utterly insufficient. RBAC can determine if a sales manager is allowed to initiate an enrichment task. It has absolutely no control over what the agent does once that task is initiated. It's a binary gate, not a continuous guidance system. The agent operates in a governance vacuum: it can reach tools and data sources through the Model Context Protocol (MCP), but it lacks a stateful "brain" to enforce rules mid-flight.
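To make the gap concrete, here is a minimal Python sketch contrasting a one-time RBAC gate with a check applied to every agent action. All names (`ROLE_PERMISSIONS`, `AgentAction`, `ALLOWED_SOURCES`, `run_task`) are hypothetical and exist only for illustration; they are not part of any real RBAC library or of Epsilla's API.

```python
from __future__ import annotations
from dataclasses import dataclass

# Hypothetical role table: RBAC answers only "may this user start the task?"
ROLE_PERMISSIONS = {"sales_manager": {"start_enrichment"}, "intern": set()}


def rbac_allows(role: str, action: str) -> bool:
    """One-time gate, evaluated before the agent starts."""
    return action in ROLE_PERMISSIONS.get(role, set())


@dataclass(frozen=True)
class AgentAction:
    kind: str    # e.g. "fetch" or "store"
    source: str  # e.g. "linkedin" or "facebook"


# Hypothetical runtime rule: the sources an agent may touch mid-task.
ALLOWED_SOURCES = {"linkedin"}


def runtime_allows(action: AgentAction) -> bool:
    """Per-action check, evaluated on every step the agent takes."""
    return action.source in ALLOWED_SOURCES


def run_task(role: str, planned: list[AgentAction]):
    """Return (executed, blocked) actions for a planned task."""
    if not rbac_allows(role, "start_enrichment"):
        raise PermissionError(f"role {role!r} may not start enrichment")
    executed, blocked = [], []
    for action in planned:
        (executed if runtime_allows(action) else blocked).append(action)
    return executed, blocked
```

With RBAC alone, both actions in a plan such as `[AgentAction("fetch", "linkedin"), AgentAction("fetch", "facebook")]` would run once the sales manager passed the gate. The per-action check is what stops the second one.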

The Semantic Graph: An Agent's Mandatory Conscience

This is not a problem that can be solved with more detailed prompts or simple if-then logic. It requires a new architectural primitive: a centralized, intelligent governance layer that understands entities, relationships, and policies. This is precisely why we built Epsilla's Semantic Graph. It is designed to be the indispensable governance brain for the agentic era.

A semantic graph is more than a database; it's a dynamic, contextual model of your data universe. When an agent is tasked to find social media accounts by email, it doesn't operate in a vacuum. Its every action is mediated by the graph.

Here’s how it works in practice:

- Entity-Centric Policy Enforcement: The graph stores not just data, but entities with types and properties (e.g., "Email," "Person," "Company"). We can attach fine-grained policies directly to these entity types. For instance, a policy can state: "For any entity of type 'EU_Prospect,' agents are only permitted to retrieve and store the `linkedin_url` and `job_title` attributes. All other discovered social profiles are to be discarded and logged as a policy-blocked action." Now, the agent's capabilities are dynamically scoped based on the nature of the data it is processing, not just the role of the user who launched it.

- Immutable Audit and Data Lineage: Every query the agent makes and every piece of data it retrieves is recorded in the graph as a new node or relationship. The graph logs which agent, tasked by which user, at what time, discovered a specific piece of information (`[Agent_ID: 789] --(DISCOVERED_ON_DATE)--> [LinkedIn_Profile: xyz]`). This creates a complete, queryable audit trail. If a regulator asks why you have a specific piece of data, you can show its entire lineage, from discovery to storage, along with the policies that were enforced at every step.

- Preventing Redundant and Unsafe Operations: Before dispatching an agent, the AaaS platform first queries the Epsilla graph. Has this email already been processed? The graph can instantly return the cached, validated, and policy-compliant data, saving immense computational cost and avoiding redundant, noisy scraping. The graph acts as the single source of truth, preventing data drift and ensuring consistency across all agentic operations.
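The first two mechanisms can be sketched in a few lines of Python. This is an illustrative approximation under stated assumptions, not Epsilla's implementation: `POLICIES`, `AuditLog`, and `apply_policy` are hypothetical names, and the audit log is a plain in-memory list rather than a tamper-proof graph store. Unknown entity types are denied by default, which is a design choice of this sketch.

```python
from __future__ import annotations
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy table keyed by entity type.
# A set lists the attributes an agent may store; None means unrestricted.
POLICIES = {
    "EU_Prospect": {"linkedin_url", "job_title"},
    "US_Prospect": None,
}


@dataclass
class AuditLog:
    """Append-only record of what each agent found and what happened to it."""
    entries: list = field(default_factory=list)

    def record(self, agent_id, user, entity_type, attribute, outcome):
        self.entries.append({
            "agent_id": agent_id,
            "user": user,
            "entity_type": entity_type,
            "attribute": attribute,
            "outcome": outcome,  # "stored" or "policy_blocked"
            "at": datetime.now(timezone.utc).isoformat(),
        })


def apply_policy(entity_type, discovered, agent_id, user, log):
    """Keep only the attributes the entity's policy permits; log every decision."""
    # Entity types with no policy fall back to an empty allow-list (deny all).
    allowed = POLICIES.get(entity_type, set())
    kept = {}
    for attribute, value in discovered.items():
        permitted = allowed is None or attribute in allowed
        outcome = "stored" if permitted else "policy_blocked"
        log.record(agent_id, user, entity_type, attribute, outcome)
        if permitted:
            kept[attribute] = value
    return kept
```

Calling `apply_policy("EU_Prospect", {...}, agent_id="789", user="alice", log=log)` with a discovered `facebook_url` drops that attribute and leaves a `policy_blocked` entry in the log, so the lineage question ("why do we hold this field, and what did we discard?") can be answered from the log alone.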

Let’s return to our sales team enriching 1,000 leads. Without Epsilla, they trigger 1,000 unguided OSINT missions, creating a compliance mess. With Epsilla, the workflow is transformed. The AaaS platform sends the list to the graph. The graph immediately identifies 150 emails as belonging to individuals known to be located in the EU and attaches the restrictive "EU_Prospect" policy. For the others, it applies a more permissive "US_Prospect" policy. The agents are dispatched with these specific, machine-readable constraints transmitted via the Model Context Protocol. They execute their tasks within these guardrails. When the data returns, it is validated against the policy one last time before being committed to the graph.
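The pre-dispatch step of this workflow might look roughly like the following Python sketch. It assumes a hypothetical `region_of` lookup and an in-memory `cache` standing in for the graph, and the function name `plan_enrichment` is invented for illustration. It mirrors the two-policy split described above; a stricter system might instead treat unknown regions as restricted.

```python
from __future__ import annotations


def plan_enrichment(emails, region_of, cache):
    """Split a lead list into already-known results and per-policy batches.

    emails:    iterable of email addresses to enrich
    region_of: hypothetical mapping of email -> region code such as "EU"
    cache:     hypothetical mapping of email -> previously validated data
    """
    cached = {}
    batches = {"EU_Prospect": [], "US_Prospect": []}
    for email in emails:
        if email in cache:
            # Already processed: reuse the stored result, dispatch no agent.
            cached[email] = cache[email]
            continue
        # Known EU leads get the restrictive policy; all others the permissive one.
        policy = "EU_Prospect" if region_of.get(email) == "EU" else "US_Prospect"
        batches[policy].append(email)
    return cached, batches
```

Each batch would then be dispatched with its policy name attached, and the results checked against that same policy before being stored.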

The ability to find social media accounts by email is no longer a chaotic, high-risk activity. It is a controlled, audited, and efficient business process.

The era of agentic AI is here. The founders and enterprises that succeed will not be the ones who simply build the most powerful agents. They will be the ones who build the smartest governance systems. An agent without a governance brain is a liability. An agent guided by a semantic graph is an unparalleled strategic asset. The choice is stark, and the architecture you choose today will determine whether you are building a resilient, compliant enterprise or a ticking time bomb.

FAQ: AI Identity Resolution

What is AI identity resolution?

AI identity resolution is the use of autonomous agents and machine learning models to automatically discover, link, and enrich data about an entity (like a person or company) from disparate sources. This commonly involves tasks like using an email to find associated social media profiles, employment history, and other public data points.

Why is traditional RBAC not enough for AI agents?

Traditional Role-Based Access Control (RBAC) governs a user's permission to start a process. It does not provide the continuous, context-aware oversight needed to manage an autonomous agent's actions during its execution. An agent requires dynamic, data-centric rules, not static, user-centric permissions.

How does a semantic graph prevent data privacy violations?

A semantic graph prevents violations by attaching enforceable policies directly to data entities. For example, it can classify a contact as an "EU_Prospect" and automatically instruct any agent interacting with that entity to store nothing beyond a LinkedIn URL and job title, thereby programmatically enforcing internal rules designed to meet regulations like GDPR.