Key Takeaways

- Consumer tools like a 'cheaterbuster ai' demonstrate a public appetite for using AI to analyze digital footprints for behavioral patterns, but this is a trivial application of a profound technology.

- The real, high-stakes value lies in enterprise security, specifically in detecting corporate 'cheating': insider threats, data exfiltration, and compliance violations.

- Traditional security tools (SIEM, DLP) are rule-based and fail to understand context, leading to high false positives and missed threats. They see events, not intent.

- Epsilla's Semantic Graph acts as the essential orchestration layer, creating a dynamic map of relationships between employees, data, systems, and communications.

- Governed AI agents, operating via a Model Context Protocol (MCP), traverse this graph to identify complex, anomalous behavioral patterns that signify genuine threats, moving security from reactive alerts to proactive, contextual intelligence.

The concept of a cheaterbuster ai has captured the public imagination. The idea is simple, almost primal: leveraging AI to sift through a person's digital exhaust—social media activity, location data, online presence—to detect patterns indicative of infidelity. It's a consumer-grade application of Open-Source Intelligence (OSINT) that speaks to a fundamental human concern. But as founders and executives, we must recognize this for what it is: a fascinating but ultimately superficial use of a deeply powerful technological paradigm. The underlying principle—using AI to analyze disparate data points to infer behavior and intent—has a far more critical and valuable application not in personal relationships, but in the enterprise.

The corporate world has its own form of cheating, and the stakes are existential. It’s not about clandestine dinners; it’s about clandestine data transfers. It’s not a broken heart; it’s a broken business. Insider threats, whether malicious or accidental, account for a staggering percentage of data breaches. An engineer quietly exfiltrating proprietary code before joining a competitor, a sales director downloading a client list to a personal device, a finance employee circumventing controls—this is the corporate infidelity that can cripple a company. And our current tools for detecting it are fundamentally broken.

For years, we’ve relied on a patchwork of Data Loss Prevention (DLP) systems, Security Information and Event Management (SIEM) platforms, and User and Entity Behavior Analytics (UEBA) tools. In practice, they operate largely on a simple, brittle logic: rules and fixed thresholds. If an employee downloads more than 100 files, flag it. If a login occurs from an unrecognized IP, alert. If an email contains the keyword "confidential" and is sent externally, block it. This approach is a relic of a bygone era. It generates a deafening roar of false positives, burying security teams in meaningless alerts while sophisticated actors learn to operate just below the threshold. These systems see isolated events, not the narrative of behavior. They can tell you a user accessed a file, but they can't tell you why, or how that action connects to a Slack conversation an hour prior and a connection to a personal cloud drive an hour later.
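To make that brittleness concrete, here is a minimal sketch of a threshold rule of the kind described above. The 100-file limit comes from the example rule in the text; the function name and the sample download counts are hypothetical and not taken from any real DLP product.

```python
from typing import Dict, List

DAILY_FILE_LIMIT = 100  # threshold from the example rule above


def flag_days(daily_downloads: Dict[str, int], limit: int = DAILY_FILE_LIMIT) -> List[str]:
    """Return the days on which a user exceeded the per-day download limit."""
    return [day for day, count in daily_downloads.items() if count > limit]


# Hypothetical data: one bulk download versus the same volume spread over a week.
bulk = {"mon": 450}
slow_drip = {"mon": 90, "tue": 90, "wed": 90, "thu": 90, "fri": 90}

print(flag_days(bulk))          # the bulk download trips the rule
print(flag_days(slow_drip))     # the slow drip never does
print(sum(slow_drip.values()))  # yet the total volume moved is the same
```

The rule evaluates each day in isolation, so an actor who knows the limit can move the same amount of data without ever being flagged.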

This is where the logic of a cheaterbuster ai, elevated to an enterprise-grade system, becomes not just useful, but necessary. The challenge is not a lack of data; we are drowning in logs, emails, chat messages, and access records. The challenge is context. The solution is to build a dynamic, contextual understanding of the entire organization—a living map of who is doing what, with what data, through which systems, and in communication with whom.

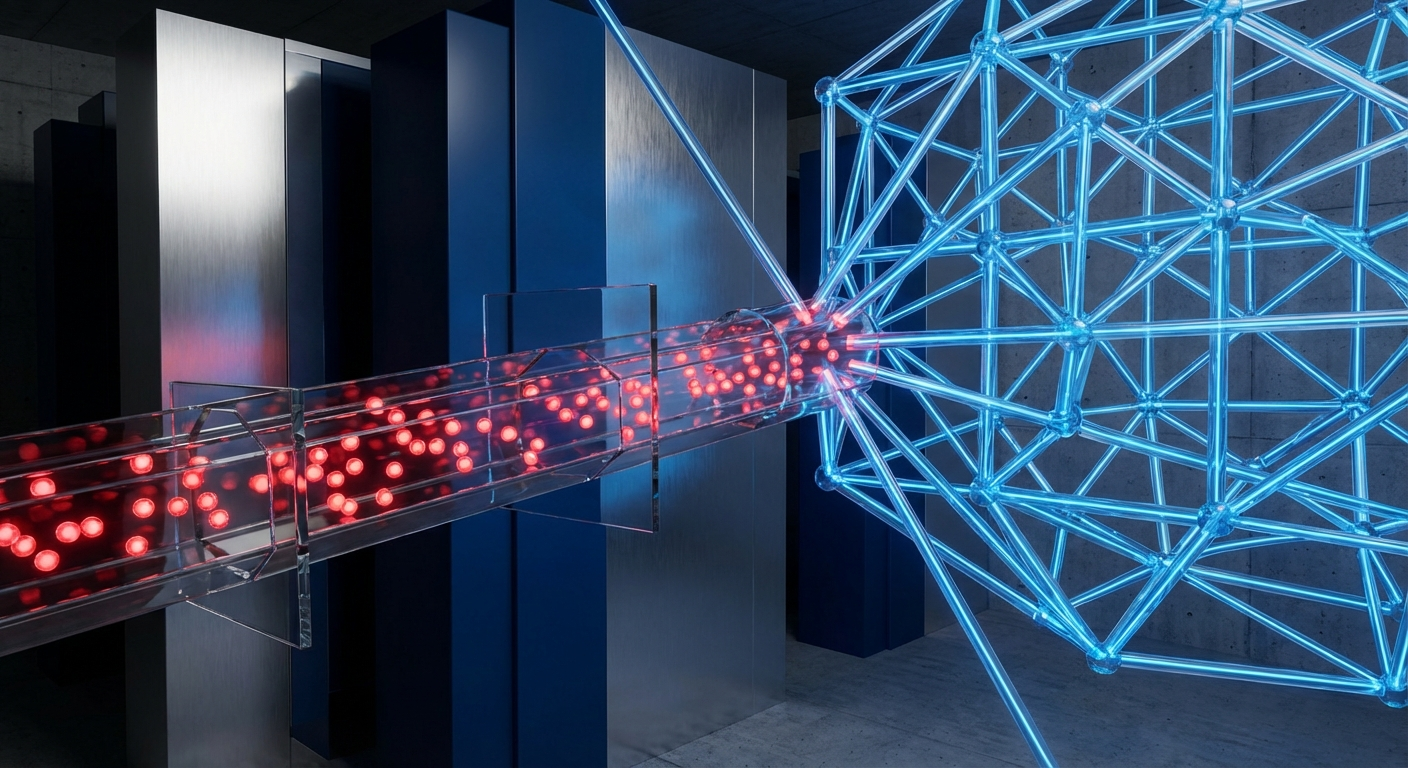

At Epsilla, we call this the Semantic Graph.

This is not a static knowledge graph. It is a multi-modal, real-time representation of the relationships between every critical entity in your organization. Employees, code repositories, customer data in Salesforce, project plans in Jira, conversations in Slack, and access logs from AWS are not isolated data points; they are nodes and edges in a vast, interconnected graph. The graph understands that an engineer named 'Sarah' is the primary committer to the 'Project-Fusion' repository, that this repository contains critical IP, and that Sarah's recent Slack conversations with 'John,' who recently left for a competitor, have shifted from technical collaboration to personal logistics.
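As a rough illustration of the nodes-and-edges idea, the Sarah example can be written as a tiny labelled graph. This is a sketch using only the Python standard library; the relation names (`primary_committer_of`, `messages`, `left_for`) and helper functions are assumptions made for illustration and do not represent Epsilla's actual schema or API.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Adjacency list: node -> list of (relation, neighbour). All labels are illustrative.
Graph = Dict[str, List[Tuple[str, str]]]


def add_edge(graph: Graph, src: str, relation: str, dst: str) -> None:
    """Record a directed, labelled edge from src to dst."""
    graph[src].append((relation, dst))


def neighbours(graph: Graph, node: str, relation: str) -> List[str]:
    """Return nodes reachable from `node` over edges with the given label."""
    return [dst for rel, dst in graph[node] if rel == relation]


graph: Graph = defaultdict(list)
add_edge(graph, "Sarah", "primary_committer_of", "Project-Fusion")
add_edge(graph, "Project-Fusion", "contains", "critical-IP")
add_edge(graph, "Sarah", "messages", "John")
add_edge(graph, "John", "left_for", "competitor")

# Two-hop question: which of Sarah's contacts have left for a competitor?
departed_contacts = [
    person
    for person in neighbours(graph, "Sarah", "messages")
    if "competitor" in neighbours(graph, person, "left_for")
]
print(departed_contacts)
```

The point of the sketch is that the interesting question spans two edges from different source systems, which is exactly what an event-by-event log view cannot answer.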

A graph, however, is just a map. To find the subtle signals of corporate cheating, you need intelligent explorers. This is the domain of Agent-as-a-Service (AaaS), powered by next-generation models like GPT-5, Claude 4, and Llama 4. These are not general-purpose chatbots; they are specialized, governed AI agents tasked with specific missions. A "Data Exfiltration" agent's sole purpose is to traverse the Semantic Graph, looking for patterns that correlate with IP theft.

Crucially, these agents do not operate in a vacuum. They are governed by what we term the Model Context Protocol (MCP). The MCP is the strict set of rules that defines how an agent can query the graph. It ensures data privacy and security by design. For instance, an agent can be permitted to analyze the metadata and patterns of communication (e.g., "Sarah's communication frequency with external contacts has increased by 300%"), but not the content of the messages themselves, unless a higher-level threat threshold is crossed and human approval is granted. The MCP is the constitutional framework that makes enterprise-scale AI analysis both powerful and trustworthy.
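The gating rule described above (metadata by default, content only after a threshold is crossed and a human approves) could be sketched as a small policy check. This is not the MCP interface itself; the `AccessRequest` type, the field names, and the 0.8 threshold are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    agent: str
    field: str            # "metadata" or "content"
    threat_score: float   # agent's current risk estimate, 0.0 to 1.0
    human_approved: bool  # whether a reviewer has signed off


CONTENT_THRESHOLD = 0.8  # assumed escalation threshold, not from the article


def is_allowed(req: AccessRequest) -> bool:
    """Metadata is always readable; content needs a high score AND approval."""
    if req.field == "metadata":
        return True
    if req.field == "content":
        return req.threat_score >= CONTENT_THRESHOLD and req.human_approved
    return False  # deny unknown field types by default


metadata_req = AccessRequest("pre-departure-risk", "metadata", 0.2, False)
content_req = AccessRequest("pre-departure-risk", "content", 0.95, False)
approved_req = AccessRequest("pre-departure-risk", "content", 0.95, True)

print(is_allowed(metadata_req))  # True: metadata needs no escalation
print(is_allowed(content_req))   # False: high score but no human approval
print(is_allowed(approved_req))  # True: both conditions met
```

Denying by default for unrecognized field types keeps the policy conservative: a new data category stays off-limits until someone explicitly adds a rule for it.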

Let's walk through a practical scenario. An employee is planning to leave and take a proprietary algorithm with them.

- Old Way (Rule-Based): The employee, knowing the DLP rules, downloads small chunks of code over several weeks, staying below the daily file-count threshold. They rename sensitive files and place them in a folder with a benign name. On their last day, they compress the folder into an encrypted archive and email it to their personal address. The DLP system, unable to inspect the contents of a single encrypted zip file, might miss it entirely or flag it too late. The SIEM logs the events, but they are lost in a sea of millions of other logs.

- The Semantic Graph & Agentic Way:

    - Signal Ingestion: The Semantic Graph ingests signals from multiple sources. The agent notices the employee's commit frequency to their primary project has dropped to near zero. Simultaneously, their access to the company's internal wiki, specifically pages on legacy architecture they haven't touched in years, spikes. OSINT data shows they recently connected with three senior engineers from a direct competitor on LinkedIn.

    - Graph Correlation: These are weak signals individually. But on the graph, they form a compelling cluster. The agent sees the nodes ('Employee,' 'Legacy Docs,' 'Competitor-Connections') light up with new, correlated edges.

    - Agentic Inquiry: A "Pre-Departure Risk" agent, governed by the MCP, begins to query the graph. It doesn't need to read emails. It asks questions like: "Correlate system access patterns for this user with their stated project responsibilities. Identify deviations." and "Map communication frequency between this user and recently departed employees."

    - Contextual Alert: The agent detects a high-confidence pattern. It doesn't just send an alert saying "Policy Violation." It generates a concise, evidence-based narrative for the Chief Information Security Officer: "User X has ceased active project contribution, is reviewing legacy IP unrelated to their role, has established contact with competitors, and is now aggregating this data into a new, isolated directory. Confidence of pre-departure IP collection: 95%."
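The correlation step in the scenario above, where individually weak signals add up to a high-confidence pattern, can be sketched with a noisy-OR combination. The article does not say how confidence is actually computed, so noisy-OR is an assumption chosen for simplicity; the signal names, weights, and alert threshold are likewise hypothetical.

```python
from typing import Dict

# Hypothetical per-signal weights: how strongly each signal alone suggests risk.
SIGNAL_WEIGHTS: Dict[str, float] = {
    "commit_frequency_dropped": 0.4,
    "legacy_docs_access_spike": 0.5,
    "new_competitor_connections": 0.3,
    "data_aggregated_in_new_directory": 0.6,
}
ALERT_THRESHOLD = 0.9  # assumed cut-off for raising a contextual alert


def combined_confidence(observed: Dict[str, bool]) -> float:
    """Noisy-OR: probability that at least one observed signal is a true indicator."""
    miss = 1.0
    for name, fired in observed.items():
        if fired:
            miss *= 1.0 - SIGNAL_WEIGHTS[name]
    return 1.0 - miss


def narrative(user: str, observed: Dict[str, bool]) -> str:
    """Summarise the fired signals and the combined confidence in one line."""
    fired = [name.replace("_", " ") for name, hit in observed.items() if hit]
    score = combined_confidence(observed)
    return f"{user}: {'; '.join(fired)}. Combined confidence: {score:.0%}"


all_signals = {name: True for name in SIGNAL_WEIGHTS}
one_signal = {name: name == "legacy_docs_access_spike" for name in SIGNAL_WEIGHTS}

print(combined_confidence(one_signal) >= ALERT_THRESHOLD)   # a single signal stays quiet
print(combined_confidence(all_signals) >= ALERT_THRESHOLD)  # the cluster crosses the line
print(narrative("User X", all_signals))
```

No single weight reaches the threshold on its own, which mirrors the scenario: the alert comes from the cluster, and the output is a readable summary of the evidence instead of a bare policy code.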

This is the fundamental shift. We move from a state of perpetual, low-context reactivity to one of proactive, high-context intelligence. The primitive logic of a consumer cheaterbuster ai is transformed into a sophisticated corporate immune system. It’s about understanding the subtle shifts in the digital body language of an organization to preempt threats before they materialize.

The public's fascination with a cheaterbuster ai reveals a core truth: we instinctively understand that digital actions leave a trail, and that patterns in this trail reveal intent. While the consumer application is a novelty, the enterprise application is a necessity. As founders, we are not just building products; we are building organisms. And the long-term health of that organism depends on its ability to understand itself and detect the internal behaviors that threaten its survival. The future of compliance and security lies not in building higher walls, but in cultivating deeper awareness. The Semantic Graph is the nervous system, and governed AI agents are the reflexes that protect it.

FAQ: AI Behavioral Analysis

How does this approach differ from traditional Data Loss Prevention (DLP)?

Traditional DLP relies on static, predefined rules (e.g., blocking keywords or file types). This agentic, graph-based approach focuses on context and behavior. It connects dozens of weaker, seemingly unrelated signals across different systems to identify the narrative of a threat, with the aim of cutting false positives and catching sophisticated attacks that stay below rule thresholds.

Is the AI reading all of our employees' private messages?

No. The system is built on a principle of "privacy by design" enforced by the Model Context Protocol (MCP). Agents primarily analyze metadata—who is talking to whom, when, and how often—and system access patterns. Accessing message content is a highly restricted, audited escalation path requiring human approval.

What is a Semantic Graph in the context of corporate security?

A Semantic Graph is a dynamic, real-time map of the relationships between all critical entities in your company: employees, data assets, code, cloud infrastructure, and communication channels. It's not just a database of things; it's an engine for understanding how those things interact to define normal and anomalous behavior.