Key Takeaways

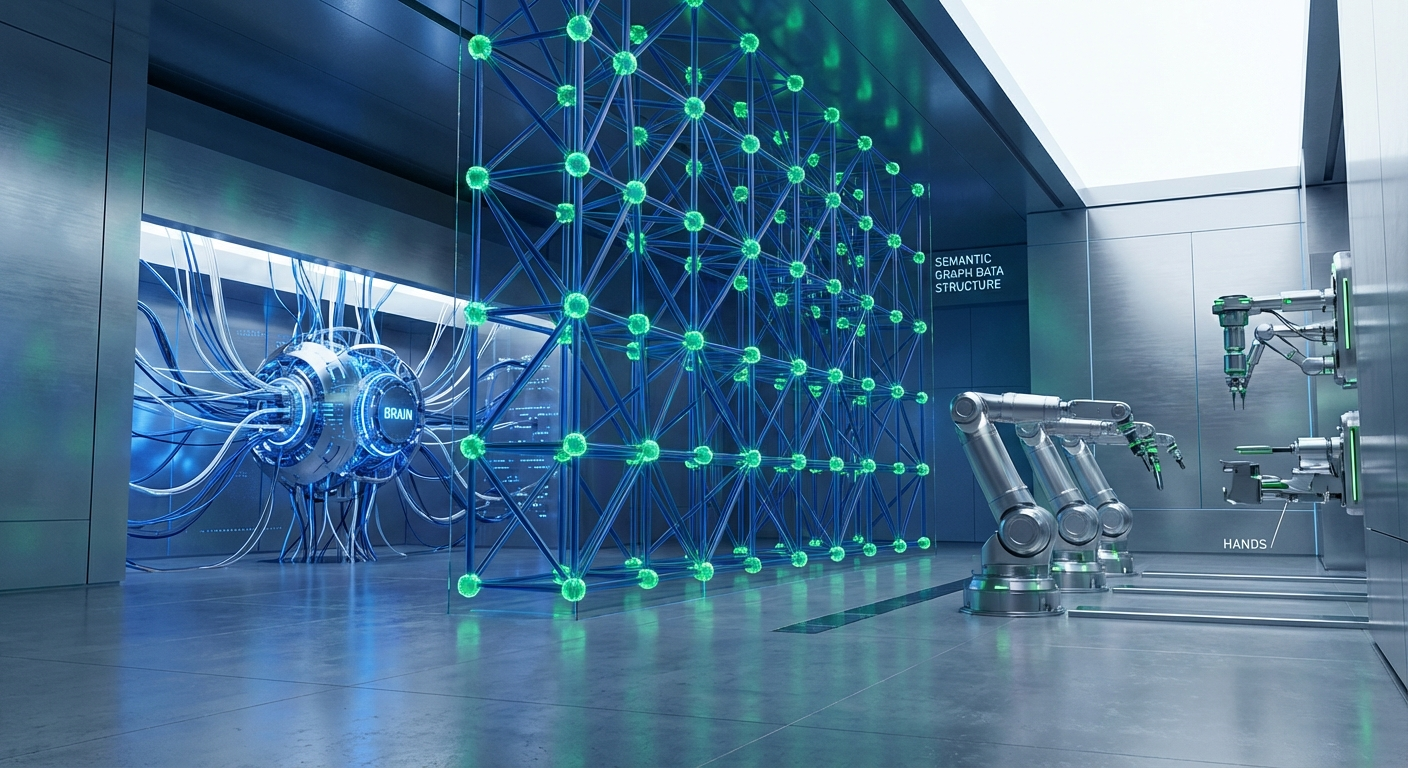

- Anthropic's "Managed Agents" platform validates a critical architectural principle: decoupling the agent's "Brain" (LLM/controller), "Hands" (execution sandbox), and "Session" (memory) is essential for security, scalability, and maintainability.

- This decoupling transforms agent infrastructure from fragile, monolithic "pets" into stateless, resilient "cattle," and mitigates major security flaws such as prompt injection that leads to credential theft.

- As foundation models (e.g., Claude 4 today, and successors such as a future GPT-6) become more capable, the role of the agent harness is not shrinking but evolving. The primary engineering question has shifted from "How do I control the model?" to "What can I stop doing and let the model handle?"

- The model should now manage its own tool orchestration, context window, and memory curation, using simple primitives like a code interpreter and a file system. The harness moves up the stack: it provides these primitives, enforces high-level security boundaries, and optimizes performance.

- While Anthropic's "Session" log is a step forward, it's a primitive solution. True enterprise-grade reasoning requires a structured, queryable memory fabric—an Epsilla Semantic Graph—orchestrated by a model-agnostic control plane like AgentStudio and audited by ClawTrace.

Anthropic’s recent launch of Claude Managed Agents, accompanied by a refreshingly candid engineering blog post, marks an inflection point in the Agent-as-a-Service (AaaS) market. It signals the end of the first era of agent development, an era defined by monolithic, fragile architectures, and the beginning of a mature, production-ready paradigm. For us at Epsilla, it’s a profound moment of validation. The core architectural breakthrough they describe, the decoupling of the Brain, the Hands, and the Session, is the very principle upon which we have built our entire enterprise agent orchestration platform.

However, their analysis also illuminates the next critical frontier. While Anthropic has masterfully solved the problem of secure, scalable execution, their concept of the "Session" as a simple event log reveals the limitations of a single-vendor approach. To build truly autonomous, multi-agent systems that can navigate enterprise complexity, we must move beyond a flat log and embrace a structured, semantic memory fabric.

The Monolithic Trap and the Decoupling Epiphany

For the past two years, the default pattern for building agents has been a dangerous anti-pattern. Developers, eager to ship, would bundle the LLM controller, the tools, the execution environment, and the session state into a single, long-running container. This monolithic design turned the infrastructure into a fragile "pet." It required careful tending, was difficult to scale, and represented a catastrophic single point of failure.

Worse, it created a security nightmare. The LLM, with access to tool-calling credentials, lived in the same environment where untrusted, model-generated code would execute. A successful prompt injection attack didn't just result in a compromised response; it could lead to the exfiltration of API keys and a complete system breach. The entire container became the blast radius.

Anthropic’s architecture systematically dismantles this trap by enforcing a clean separation of concerns:

- The Brain: The LLM itself (e.g., Claude 4) and the surrounding harness or controller logic that orchestrates the overall task. This is the cognitive core.

- The Hands: A sandboxed, ephemeral execution environment. This is where tools like bash or a Python REPL run. Crucially, this environment is stateless and has zero access to long-lived credentials. Communication with the Brain is mediated by the Model Context Protocol (MCP), with credentials held outside the sandbox so that sensitive tokens never enter it.

- The Session: A persistent, append-only event log that serves as the agent's external memory. It records every thought, tool invocation, and observation, allowing a new, stateless "Brain" container to spin up and reconstruct the full context of a task at any moment.
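To make the Session idea concrete, here is a minimal Python sketch of an append-only event log and a function that lets a freshly started, stateless Brain rebuild its working context from it. The `Session`, `Event`, and `rebuild_context` names and the event kinds are hypothetical illustrations, not Anthropic's API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Event:
    # One immutable record: a thought, a tool call, or an observation.
    kind: str  # hypothetical kinds: "thought", "tool_call", "observation"
    content: str


@dataclass
class Session:
    # Append-only: events can be added and read, never edited or deleted.
    _events: List[Event] = field(default_factory=list)

    def append(self, kind: str, content: str) -> int:
        self._events.append(Event(kind, content))
        return len(self._events) - 1  # position of the new event in the log

    def events(self, start: int = 0) -> List[Event]:
        # Return a copy so callers cannot mutate the log in place.
        return list(self._events[start:])


def rebuild_context(session: Session) -> List[str]:
    # A brand-new Brain holds no state of its own; it replays the log
    # from the beginning to reconstruct the task context.
    return [f"[{e.kind}] {e.content}" for e in session.events()]


session = Session()
session.append("thought", "List the files in the repo")
session.append("tool_call", "bash: ls")
session.append("observation", "README.md main.py")

# Simulate the original Brain crashing: a new one starts from the log alone.
print(rebuild_context(session))
```

Because the log is the only source of truth, any Brain instance can be replaced at any time without losing the task.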

This decoupling is not an incremental improvement; it is a fundamental architectural shift. It allows the execution containers (the "Hands") to be treated as stateless "cattle," not precious "pets." They can be created, destroyed, and scaled on demand without losing the agent's state, which now resides securely in the external Session log. This is the only viable path to building robust, secure, and scalable agentic systems.
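The "cattle" property can be illustrated with a sketch in which every execution gets a fresh, throwaway working directory and a child process whose environment is stripped of the parent's secrets. This is a toy under loud assumptions: a separate process with a scrubbed environment is not a real security sandbox (production systems would use containers or microVMs), and `run_in_ephemeral_hands` is a hypothetical name.

```python
import os
import subprocess
import sys
import tempfile


def run_in_ephemeral_hands(code: str, timeout: float = 10.0) -> str:
    # A fresh working directory per call; it is deleted when the block exits,
    # so nothing the code writes survives between calls.
    with tempfile.TemporaryDirectory() as workdir:
        # Pass only a minimal environment: no API keys or tokens from the
        # parent (Brain) process are inherited by the child (Hands).
        minimal_env = {"PATH": os.environ.get("PATH", "")}
        if "SYSTEMROOT" in os.environ:  # Windows needs this to start Python
            minimal_env["SYSTEMROOT"] = os.environ["SYSTEMROOT"]
        result = subprocess.run(
            [sys.executable, "-c", code],
            cwd=workdir,
            env=minimal_env,
            capture_output=True,
            text=True,
            timeout=timeout,
        )
        return result.stdout.strip()


os.environ["API_KEY"] = "sk-secret"  # pretend secret held by the Brain
# The child cannot see the secret, so this prints "None".
print(run_in_ephemeral_hands("import os; print(os.environ.get('API_KEY'))"))
```

Any state worth keeping has to be written back through the harness to the Session; the execution environment itself is disposable.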

The Shrinking Harness: What to Stop Doing

With the core architecture secured, Anthropic’s follow-up analysis addresses the next logical question, one that every agent developer should be asking constantly: "What can I stop doing?" As foundation models improve rapidly, components of the harness that were once critical become redundant, even counterproductive. Clinging to them is like maintaining a custom transmission after the manufacturer has started shipping a better, fully integrated one.

The harness's role is shifting from low-level control to high-level enablement.

1. From Harness-Led to Model-Led Orchestration: The old paradigm assumed the harness was the master orchestrator. A tool would be called, its full output would be stuffed back into the context window, and the harness logic would decide the next step. This is incredibly inefficient. If an agent needs to analyze one column from a 10,000-row CSV, why waste tokens forcing the model to read the entire file?

The new paradigm is to trust the model. Give a powerful coding model like Claude 4 (or a future GPT-6) a bash or REPL tool and let it write the code that orchestrates the sub-tasks. The model can call a tool, pipe the output through grep or awk to filter it, and return only the final, relevant result to its own context window. The orchestration logic moves from the harness's rigid code to the model's flexible, dynamically generated code. As Anthropic demonstrated, giving a model this self-filtering capability on the BrowseComp benchmark raised its accuracy from 45.3% to 61.6%, on a web browsing task rather than a coding one. This strongly suggests that code is a universal language of orchestration.
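As an illustration of model-led filtering, this is the kind of short script a model might write inside a REPL instead of pulling a 10,000-row CSV into its context window. The column names and data are invented for the example; only the short summary string at the end would be returned to the model's context.

```python
import csv
import io
import statistics


def summarize_column(csv_text: str, column: str) -> str:
    # Stream the rows and keep only the one column the task needs.
    reader = csv.DictReader(io.StringIO(csv_text))
    values = [float(row[column]) for row in reader if row[column]]
    # Return a compact summary instead of the raw rows.
    return (
        f"{column}: n={len(values)}, "
        f"mean={statistics.mean(values):.2f}, max={max(values):.2f}"
    )


# Synthetic stand-in for a large file: 10,000 rows, three columns.
rows = ["order_id,region,amount"]
rows += [f"{i},{'EU' if i % 2 else 'US'},{i % 100}" for i in range(10_000)]
big_csv = "\n".join(rows)

print(summarize_column(big_csv, "amount"))
# amount: n=10000, mean=49.50, max=99.00
```

The raw file is well over 100 KB of text, while the line that re-enters the context is under 50 characters.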

2. From Pre-loaded Prompts to On-Demand Context: System prompts have become bloated repositories of every possible instruction an agent might need. This front-loads the context window, spending a precious budget of attention and tokens on information that is irrelevant to most tasks.

The solution is to let the model manage its own context. In a "Skills" pattern, each tool or capability is described by a brief YAML frontmatter (typically a name and a one-line description), and only these summaries are loaded up front, giving the model a high-level overview. When it decides a specific skill is needed, it uses a simple read_file tool to pull the full documentation into its context, just in time. This is a move from static, pre-loaded context to dynamic, agent-driven context assembly.
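The Skills pattern can be sketched as two steps: index only the frontmatter of each skill document, then load a full document when the model asks for it. The skill texts, the `name`/`description` keys, and the `load_skill` helper (a stand-in for a read_file tool) are assumptions for illustration; the parser handles only flat `key: value` lines to avoid a YAML dependency.

```python
from typing import Dict

# Hypothetical skill documents: frontmatter between '---' lines,
# followed by the full instructions.
SKILLS: Dict[str, str] = {
    "pdf": (
        "---\nname: pdf\ndescription: Extract text and tables from PDFs\n---\n"
        "Full instructions: open the file, then extract each page in order."
    ),
    "sql": (
        "---\nname: sql\ndescription: Query the analytics warehouse\n---\n"
        "Full instructions: connect read-only, then run one query at a time."
    ),
}


def parse_frontmatter(doc: str) -> Dict[str, str]:
    # Minimal parser: flat "key: value" lines between the first two '---' markers.
    _, header, _ = doc.split("---\n", 2)
    meta = {}
    for line in header.strip().splitlines():
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    return meta


def skill_index(skills: Dict[str, str]) -> str:
    # Only this short index goes into the system prompt up front.
    lines = []
    for doc in skills.values():
        meta = parse_frontmatter(doc)
        lines.append(f"- {meta['name']}: {meta['description']}")
    return "\n".join(lines)


def load_skill(skills: Dict[str, str], name: str) -> str:
    # Stand-in for a read_file call: the full body enters context on demand.
    return skills[name].split("---\n", 2)[2]


print(skill_index(SKILLS))
print(load_skill(SKILLS, "sql"))
```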

3. From Complex RAG to Simple Memory Primitives: For long-running tasks that exceed a single context window, the default solution has been to build a complex Retrieval-Augmented Generation (RAG) pipeline around the model. But this is another case of the harness doing work the model is increasingly capable of doing itself.

Anthropic’s research shows that giving the model simple, powerful primitives can be more effective than building a retrieval pipeline around it. A memory folder where the agent can read and write files becomes a powerful tool for long-term persistence. The key insight is that the quality of the memory depends on the intelligence of the model. A less capable model might just dump raw logs. As their Pokémon example illustrates, Claude 3.5 Sonnet filled its folder with repetitive notes about NPCs. Claude 4 Opus, given the exact same tool, created a structured directory, tracked its progress, and even created a learnings.md file to reflect on its own failures. The harness provides the file system; the model provides the intelligence.
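A memory folder needs very little harness code. The sketch below exposes three hypothetical tools (`write_memory`, `read_memory`, `list_memory`) over a single directory, with a check that keeps the model from escaping it; what goes into the files, and how they are organized, is left entirely to the model. The tool names and file paths are illustrative assumptions, and `Path.is_relative_to` requires Python 3.9 or later.

```python
import tempfile
from pathlib import Path
from typing import List


class MemoryFolder:
    def __init__(self, root: Path) -> None:
        self.root = root.resolve()

    def _safe_path(self, rel: str) -> Path:
        # Resolve the path and refuse anything outside the memory root
        # (for example "../../etc/passwd").
        path = (self.root / rel).resolve()
        if not path.is_relative_to(self.root):
            raise PermissionError(f"path escapes memory folder: {rel}")
        return path

    def write_memory(self, rel: str, text: str) -> None:
        path = self._safe_path(rel)
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(text, encoding="utf-8")

    def read_memory(self, rel: str) -> str:
        return self._safe_path(rel).read_text(encoding="utf-8")

    def list_memory(self) -> List[str]:
        # Relative paths of every file, so the model can see its own structure.
        return sorted(
            p.relative_to(self.root).as_posix()
            for p in self.root.rglob("*")
            if p.is_file()
        )


memory = MemoryFolder(Path(tempfile.mkdtemp()))
memory.write_memory("progress/status.md", "Task 3 of 5 complete")
memory.write_memory("learnings.md", "Stuck in a loop twice; check the map first")
print(memory.list_memory())  # ['learnings.md', 'progress/status.md']
```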

The New Frontier: Where the Harness Must Evolve

This philosophy of "doing less" does not mean the harness becomes obsolete. Its responsibilities are simply moving up the stack, focusing on two critical areas that the model cannot and should not handle alone: performance optimization and security boundaries.

Caching Strategy: With stateless message-based APIs, every turn in a conversation requires resubmitting the entire history. Aggressive caching is therefore not a feature; it's an economic necessity. Because prompt caches match on an exact prefix of the request, any change early in the prompt invalidates everything after it. The harness must therefore be designed to maximize cache hit rates: append new messages rather than modifying old ones, keep tool definitions stable and in a fixed order, and place dynamic content at the end of prompts.
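The prefix rule can be made concrete with a small simulation that counts how many leading messages two consecutive requests share. It is a toy model of prefix reuse, not any provider's caching API, and the message contents are invented.

```python
from typing import Dict, List

Message = Dict[str, str]


def shared_prefix_len(previous: List[Message], current: List[Message]) -> int:
    # Count leading messages that are identical; everything after the
    # first difference has to be reprocessed.
    count = 0
    for old, new in zip(previous, current):
        if old != new:
            break
        count += 1
    return count


history = [
    {"role": "system", "content": "You are a coding agent. Tools: bash."},
    {"role": "user", "content": "Fix the failing test."},
    {"role": "assistant", "content": "Running pytest..."},
]

# Good: append the new turn; the whole previous request stays a prefix.
appended = history + [{"role": "user", "content": "Now run the linter."}]

# Bad: inject a timestamp into the system prompt; the prefix breaks at message 0.
edited = [
    {"role": "system", "content": "Time: 12:01. You are a coding agent. Tools: bash."}
] + history[1:]

print(shared_prefix_len(history, appended))  # 3
print(shared_prefix_len(history, edited))    # 0
```

This is also why dynamic values such as timestamps belong at the end of the prompt rather than in the system message.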

Declarative Boundaries: If an agent's only tool is bash, then rm -rf / and ls -l look identical to the harness—they are both just strings of code. This is untenable from a security and governance perspective. The harness’s new, critical role is to define a clear boundary between safe, exploratory actions and high-stakes, irreversible ones.

Actions that require user confirmation, interact with sensitive external APIs, or modify critical state should be pulled out of the bash sandbox and defined as distinct, declarative tools. This allows the harness to intercept the call, apply security policies, pop a confirmation dialog for the user, or generate a detailed audit log. This is the control plane where enterprise-grade governance is enforced. The decision of what to elevate from a bash command to a declarative tool is a continuous process of risk assessment, and it is one of the most important design tasks for the modern agent architect.
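A minimal sketch of that interception point: low-risk work stays in the free-form bash tool, while a high-stakes action is registered as a named, declarative tool that the harness gates behind a confirmation callback and records in an audit log. `DeclarativeTool`, `Harness`, `delete_database`, and the confirm callback are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class DeclarativeTool:
    name: str
    run: Callable[[Dict[str, str]], str]
    requires_confirmation: bool = False


@dataclass
class Harness:
    confirm: Callable[[str, Dict[str, str]], bool]  # e.g. a UI dialog
    tools: Dict[str, DeclarativeTool] = field(default_factory=dict)
    audit_log: List[str] = field(default_factory=list)

    def register(self, tool: DeclarativeTool) -> None:
        self.tools[tool.name] = tool

    def call(self, name: str, args: Dict[str, str]) -> str:
        tool = self.tools[name]
        # Because the action is a named tool rather than an opaque bash
        # string, the harness can apply policy before anything executes.
        if tool.requires_confirmation and not self.confirm(name, args):
            self.audit_log.append(f"DENIED {name} {args}")
            return "denied by user"
        self.audit_log.append(f"ALLOWED {name} {args}")
        return tool.run(args)


# Simulated user who rejects every confirmation request.
harness = Harness(confirm=lambda name, args: False)
harness.register(
    DeclarativeTool(
        "delete_database", lambda a: f"dropped {a['db']}", requires_confirmation=True
    )
)
harness.register(DeclarativeTool("read_logs", lambda a: "last 10 lines"))

print(harness.call("delete_database", {"db": "prod"}))  # denied by user
print(harness.call("read_logs", {}))                    # last 10 lines
print(harness.audit_log)
```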

Beyond the Log: Why Anthropic's "Session" is Not Enough

This brings us to the logical conclusion of Anthropic's architecture, and where the Epsilla vision begins. Their "Session"—an append-only log of events—is a brilliant solution for achieving statelessness and providing basic memory. But for the complex, multi-agent systems that enterprises need, a flat log is fundamentally insufficient. It’s a chronological record, but it has no understanding of the relationships between the events it records. It’s the difference between a ship's log and a navigational chart.

An enterprise agent doesn't just need to know what happened; it needs to understand why. It needs to query the relationships between customers, contracts, support tickets, and product deployments. It needs to reason over a knowledge base that evolves and connects disparate pieces of information.

This is why we built the Epsilla Semantic Graph. It is the next-generation "Session" layer—a living, queryable memory fabric that captures not just events, but the entities and relationships that connect them. When one agent updates a customer record, other agents subscribed to that entity are instantly aware of the change. The graph becomes a shared, structured "external brain" that enables true multi-agent coordination and sophisticated reasoning that is impossible with a simple log file.
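To show the difference from a flat log, here is a toy in-memory entity graph with typed relationships and per-entity subscriptions. It is a hypothetical illustration of the concept only; it is not the Epsilla Semantic Graph API, and every class, method, and entity name in it is invented.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Set, Tuple

Listener = Callable[[str, Dict[str, str]], None]


class ToyEntityGraph:
    def __init__(self) -> None:
        self.entities: Dict[str, Dict[str, str]] = {}
        self.edges: Set[Tuple[str, str, str]] = set()  # (source, relation, target)
        self.subscribers: Dict[str, List[Listener]] = defaultdict(list)

    def upsert(self, entity_id: str, **attrs: str) -> None:
        self.entities.setdefault(entity_id, {}).update(attrs)
        # Synchronously notify every agent subscribed to this entity.
        for listener in self.subscribers[entity_id]:
            listener(entity_id, self.entities[entity_id])

    def relate(self, source: str, relation: str, target: str) -> None:
        self.edges.add((source, relation, target))

    def neighbors(self, source: str, relation: str) -> List[str]:
        # A relationship query that a chronological log cannot answer directly.
        return sorted(t for s, r, t in self.edges if s == source and r == relation)

    def subscribe(self, entity_id: str, listener: Listener) -> None:
        self.subscribers[entity_id].append(listener)


graph = ToyEntityGraph()
graph.upsert("customer:acme", tier="gold")
graph.upsert("ticket:42", status="open")
graph.relate("customer:acme", "has_contract", "contract:2024-01")
graph.relate("customer:acme", "opened", "ticket:42")

# A support agent watches the customer; a billing agent then updates it.
seen: List[str] = []
graph.subscribe("customer:acme", lambda eid, attrs: seen.append(f"{eid} -> {attrs['tier']}"))
graph.upsert("customer:acme", tier="platinum")

print(graph.neighbors("customer:acme", "opened"))  # ['ticket:42']
print(seen)                                        # ['customer:acme -> platinum']
```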

This superior memory fabric is managed by AgentStudio, our model-agnostic control plane. While Anthropic's platform is, by design, centered on Claude, enterprises cannot afford to be locked into a single model provider; the pace of innovation is too rapid. AgentStudio allows you to orchestrate a heterogeneous fleet of agents: for example, a future GPT-6 for creative reasoning, Claude 4 for its coding prowess, and an open-weight Llama 4 model fine-tuned for internal data analysis. All these agents collaborate through the central Semantic Graph, using the best tool for every job.
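The core of a model-agnostic control plane is an indirection between a task and the model that serves it. The sketch below routes sub-tasks to interchangeable backends behind one interface; the backend classes are stubs, the routing table and model labels are assumptions, and nothing here reflects AgentStudio's actual API or any provider SDK.

```python
from typing import Dict, Protocol


class ModelBackend(Protocol):
    def complete(self, prompt: str) -> str: ...


class StubBackend:
    # Stand-in for a real provider client; it just echoes which model ran.
    def __init__(self, model_name: str) -> None:
        self.model_name = model_name

    def complete(self, prompt: str) -> str:
        return f"[{self.model_name}] {prompt}"


class Router:
    def __init__(self, routes: Dict[str, ModelBackend], default: str) -> None:
        self.routes = routes
        self.default = default

    def run(self, task_type: str, prompt: str) -> str:
        # Swapping a provider means editing this table, not the agents.
        backend = self.routes.get(task_type, self.routes[self.default])
        return backend.complete(prompt)


router = Router(
    routes={
        "coding": StubBackend("claude"),
        "creative": StubBackend("gpt"),
        "internal_analytics": StubBackend("llama-finetune"),
    },
    default="coding",
)
print(router.run("coding", "Refactor utils.py"))  # [claude] Refactor utils.py
print(router.run("unknown", "Summarize"))         # [claude] Summarize
```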

Finally, this entire system is observed and secured by ClawTrace. It provides the deep, causal audit trail that enterprises require, tracing every interaction between agents, tools, and the Semantic Graph. It is the logical extension of Anthropic's declarative boundaries, providing a comprehensive governance layer for complex, autonomous systems.
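A causal audit trail is, at its simplest, a set of spans that each record their parent, so any action can be traced back to the request that caused it. The toy sketch below shows that idea; the `Span` fields and the example events are invented, and it does not describe how ClawTrace is implemented.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Span:
    span_id: str
    parent_id: Optional[str]
    actor: str  # which agent, tool, or graph operation acted
    action: str


def causal_chain(spans: Dict[str, Span], span_id: str) -> List[str]:
    # Walk parent links from an action back to its root cause.
    chain = []
    current: Optional[str] = span_id
    while current is not None:
        span = spans[current]
        chain.append(f"{span.actor}: {span.action}")
        current = span.parent_id
    return list(reversed(chain))  # root cause first


trace = [
    Span("s1", None, "user", "asked to close stale tickets"),
    Span("s2", "s1", "support-agent", "queried graph for open tickets"),
    Span("s3", "s2", "support-agent", "called close_ticket(42)"),
]
spans = {s.span_id: s for s in trace}
for step in causal_chain(spans, "s3"):
    print(step)
```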

Anthropic has laid out the foundational grammar for the next era of AaaS. Decouple the Brain and the Hands. Let the model do what it does best. But to write the epic poems of enterprise automation, we must evolve the Session from a simple log into a rich, semantic graph. That is the next frontier, and it's the future we are building at Epsilla.

FAQ: Decoupled Agent Architecture

What is the core benefit of a decoupled agent architecture?

The primary benefits are security, scalability, and maintainability. By separating the "Brain" (logic), "Hands" (execution), and "Session" (state), you can create stateless, sandboxed execution environments that prevent security breaches and allow for resilient, on-demand scaling.

Why is a simple session log insufficient for enterprise agents?

A flat log lacks semantic structure. It records a sequence of events but doesn't understand the relationships between them. This makes complex queries, causal reasoning, and effective multi-agent coordination nearly impossible, which is why a structured semantic graph is superior.

How does a model-agnostic control plane like AgentStudio provide a competitive advantage?

It prevents vendor lock-in and future-proofs your architecture. You can dynamically orchestrate the best-in-class model (e.g., from OpenAI, Anthropic, Google, or open-source) for any given sub-task, ensuring optimal performance and cost-efficiency without being tied to a single ecosystem.