Key Takeaways

- Building production-grade AI agents has been historically blocked by the "infrastructure tax"—months of undifferentiated work on sandboxing, state management, and orchestration loops before any real business value can be delivered.

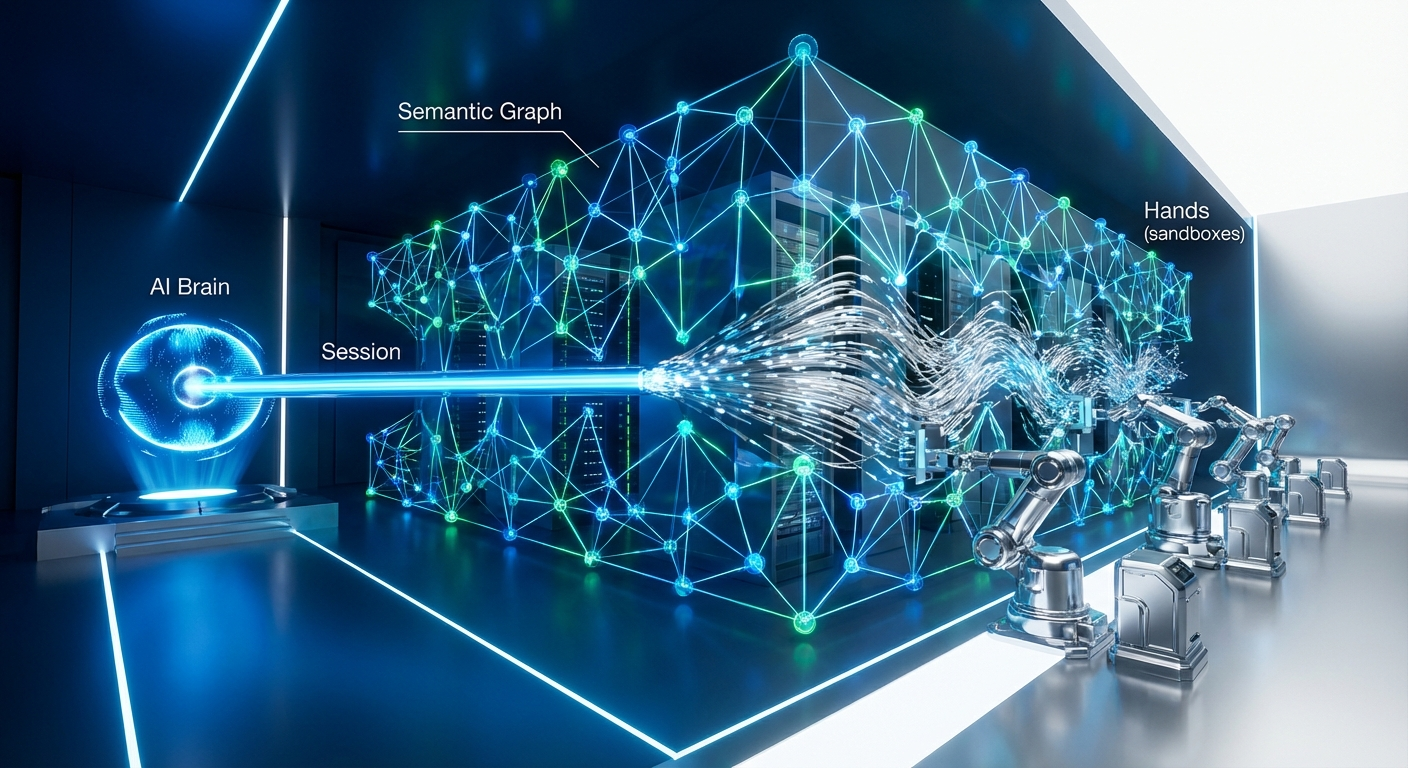

- Anthropic's new Managed Agents platform fundamentally changes the game by commoditizing this infrastructure. Its "Brain, Hands, Session" model decouples the LLM from the execution environment and state, compressing development from months to days.

- We experienced this firsthand building "Tracy," our observability agent for ClawTrace. We bypassed the entire custom infrastructure build, going from concept to a robust, cloud-hosted agent in under a week by using the managed platform as our backend.

- However, this commoditization doesn't eliminate all problems; it shifts them. The new frontier of pain is in application-level challenges: data access performance, schema brittleness, data-level security, and cost control—issues the underlying infrastructure can't solve.

- The ultimate solution is a dedicated control plane. Platforms like Epsilla's AgentStudio provide the governance and observability layer, while a Semantic Graph provides the deterministic, high-performance data interface required for enterprise-grade agent execution.

For the last two years, the AI industry has been trapped in a frustrating cycle. We've seen thousands of impressive agent demos, but vanishingly few have made it into production. The chasm between a clever proof-of-concept and a secure, scalable, and reliable enterprise system has been immense. The reason is simple: the "agent infrastructure tax."

Before you could even begin to solve a real business problem, you were on the hook for months of grueling, undifferentiated engineering. You had to build secure execution sandboxes, typically wrestling with Docker, Firecracker, or gVisor to prevent an agent from escaping its confines and wreaking havoc. You had to architect complex state management systems, stitching together vector databases and Redis caches just to give your agent a semblance of memory beyond its immediate context window. You had to hand-roll brittle orchestration logic—endless ReAct prompt loops, JSON parsing, and retry mechanisms—that would shatter the moment an LLM deviated from the happy path.

This tax was killing innovation. Teams were spending 90% of their time on plumbing and only 10% on the unique logic that actually delivered value. At Epsilla, we lived this pain. Our initial attempts at building "Tracy," our in-house observability agent for ClawTrace, were mired in this exact swamp. We were staring down a multi-quarter roadmap just to build the foundational scaffolding.

Then, Anthropic released their Claude Managed Agents platform. It wasn't just another API endpoint; it was a fundamental paradigm shift that commoditizes the entire infrastructure layer. By cleanly decoupling the agent's "Brain," "Hands," and "Session," Anthropic has effectively zeroed out the infrastructure tax. This shift allowed us to scrap our complex custom architecture and build a production-ready Tracy agent in a matter of days.

This is the story of that transition, the new class of problems we uncovered, and why the future of agent development isn't about the infrastructure, but about the control plane and semantic data layers that sit on top.

The Paradigm Shift: Deconstructing the "Brain, Hands, Session" Model

To understand the impact, you have to understand the architecture. Anthropic's platform isn't magic; it's a brilliantly executed abstraction over the three core components of any agent system.

First is the Brain. This is the component we're all familiar with: the large language model itself (in this case, a Claude 4-series model) that serves as the reasoning engine. It's the controller, the orchestrator, the component that decides what to do next based on a goal and the current context. In the old world, the Brain was inextricably tangled with everything else.

The second, and arguably most revolutionary, component is the Hands. These are ephemeral, securely sandboxed execution environments. When the Brain decides to run a piece of code or execute a command, the platform dynamically provisions a sterile, stateless environment to do the work and then tears it down. For developers, this is a massive unlock. The entire nightmare of container security, dependency management, and preventing cross-session contamination is simply handled. It abstracts away the most dangerous and complex part of agent infrastructure.

Finally, there's the Session. This is a persistent, append-only event log that functions as the agent's external memory. Every thought, every tool call, every observation is recorded. This elegantly solves the state management problem. Instead of us manually stuffing conversation history into a context window or building a complex RAG pipeline, we simply reference a session_id. The platform ensures the Brain has the necessary long-term context to complete multi-step tasks, recover from interruptions, and maintain state over hours or even days.

This tripartite architecture—Brain, Hands, Session—effectively turns agent infrastructure into a utility, much like cloud computing turned servers into a utility two decades ago. You no longer build the power plant; you just plug into the grid.

From Months to Days: Rebuilding Tracy on Managed Agents

Our experience with Tracy provides a stark before-and-after picture. The goal for Tracy was to create an AI agent embedded within our ClawTrace observability platform. It needed to be able to investigate complex system trajectories, diagnose root causes, and present findings to engineers—all by interacting with our telemetry data.

Our initial V1 architecture was a classic example of the infrastructure tax. We had a FastAPI backend managing a custom ReAct loop, a Redis instance for session caching, and a planned-out but not-yet-built Docker-based sandboxing service for running Python code. It was fragile, complex, and months away from being production-ready.

When the managed-agents-2026-04-01 beta was released, we threw that entire architecture in the trash.

Our new architecture is radically simpler. The FastAPI backend is now little more than a thin proxy. When a user sends a message to Tracy, our routers/tracy.py endpoint simply forwards it to the Anthropic session API, passing along the session_id. Anthropic's managed infrastructure takes over completely, handling the reasoning cycle, invoking the tools we've defined, executing code in its native sandboxed "Hands," and persisting the state in the "Session." We stream the results back to our frontend using Server-Sent Events.

The entire custom orchestration loop, the Redis cache, the planned Docker service—all of it vanished. We went from a multi-month infrastructure project to a few hundred lines of Python in a weekend. Our backend's only remaining responsibilities are to persist the final conversation history to PostgreSQL for user records and to inject critical, real-time context—like the tenant_id and trace_id from the user's current view—into the initial prompt.

This is what "months to days" looks like in practice. It's the direct result of outsourcing the undifferentiated heavy lifting of infrastructure to a dedicated platform.

The New Pain: Where Managed Infrastructure Fails

This newfound velocity is exhilarating, but it's not a panacea. By abstracting away the infrastructure problem, the Managed Agents platform revealed a new, more subtle class of application-level challenges. The platform gives the agent a place to think (Brain), a safe place to work (Hands), and a way to remember (Session), but it offers no opinion on what the agent should be working on or how it should interact with your proprietary systems.

This is where we hit the next wall, and it's defined by four key pain points:

- Performance of Data Access: Tracy's primary tool is the ability to query our vast telemetry data lake via Cypher. Our data lives in Delta Lake, and we use a high-performance graph query engine called PuppyGraph to provide a graph interface. While PuppyGraph itself is incredibly fast and highly optimized for complex multi-hop graph traversals over data lakes, the underlying LLM often lacks the contextual awareness to write efficient queries out of the gate. Without understanding the specific data topology, an unguided LLM can generate logically sound but poorly optimized Cypher queries that strain the system. We had to implement heavy-handed prompt instructions and strict schema boundaries to ensure the agent leverages PuppyGraph's capabilities efficiently, rather than generating brute-force table scans.

- Schema & Tool Brittleness: The LLM is a probabilistic, not a deterministic, system. Our PuppyGraph layer has a specific quirk where you must use the

elementId(v)function to get a vertex's ID instead of the standardv.idnotation. Despite instructing Tracy on this rule in its system prompt, it would occasionally "forget" and generate a failing query. This single point of failure would break the execution loop, forcing retries and degrading the user experience. - Strict Data Security and Scoping: While Anthropic's "Hands" provide infrastructure-level security (the agent can't break out of its sandbox), they don't provide data-level security. The agent could easily forget to include a

WHERE t.tenant_id = '...'clause in its query, potentially exposing cross-tenant data. We couldn't trust the LLM with this critical responsibility. Our solution was to build a Model Context Protocol (MCP) server that acts as a middleman, validating every single query from the agent to ensure it's properly scoped before execution. This is a critical security layer that lives outside the managed platform. - Runaway Token Costs: The managed "Session" is a double-edged sword. Its long-term memory is powerful, but it can lead to massive context windows and exorbitant costs on long-running tasks. The platform provides the mechanism, but not the governance. We had to build our own granular consumption billing and monitoring on top to differentiate between new input tokens and cached tokens from the session history, giving us the financial controls necessary to deploy this at scale.

These problems are not theoretical. They are the day-to-day reality of building with Agent-as-a-Service (AaaS) platforms. The battle has moved up the stack from raw infrastructure to control, governance, and data interaction.

The Epsilla Thesis: The Control Plane and the Semantic Graph

The commoditization of the agent infrastructure layer is the single most important trend in AI development for 2026. The winners in this new era will not be those who build a better sandbox, but those who build the best control plane for managing fleets of agents running on these commodity platforms.

This is the core thesis behind Epsilla. The challenges we faced with Tracy—performance, brittleness, security, and cost—are universal. They are the new "great filters" that separate production-grade agents from demos.

Our solution is twofold.

First, AgentStudio is the dedicated control plane. It's the missing layer of governance for managed agents. It's where you define, test, and enforce the rules that tame the probabilistic nature of the LLM Brain. It's where our MCP server's security validation logic lives. It's where you set budgets, monitor performance, and analyze token consumption. It provides the observability and determinism that enterprise applications demand but AaaS platforms, by design, do not provide.

Second, the solution to the data access problem—the slow queries and brittle schema interactions—is the Semantic Graph. Instead of forcing an LLM to learn the quirks of your underlying database and write complex, low-level queries, you expose a high-level, semantic layer. The agent no longer writes Cypher; it makes a deterministic API call to the graph, asking a business question like find_root_cause(trace_id). The Semantic Graph, which has a deep understanding of your data's structure and performance characteristics, is then responsible for translating that request into an optimized, secure, and performant query.

This architecture moves the complexity out of the probabilistic LLM and into a deterministic software layer that you control. The agent's job is simplified from "code writer" to "tool user," dramatically increasing reliability and performance.

The future is clear. Anthropic, OpenAI, and others will provide the engines—the powerful, commoditized AaaS platforms. But to build a car, you need a chassis, a steering wheel, a dashboard, and a braking system. That is the role of the control plane and the semantic graph. They are the essential components that turn a raw engine into a vehicle capable of navigating the complex terrain of the enterprise. We didn't just build Tracy; we uncovered the blueprint for the next generation of enterprise AI.

FAQ: Managed Agents and Enterprise Architecture

What's the real difference between a managed agent platform and just using a function-calling API?

The key difference is infrastructure abstraction. A function-calling API gives you a hook, but you are still responsible for building the execution sandbox, managing the state, and orchestrating the multi-step logic loop. A managed platform like Anthropic's provides all of that as a built-in, cloud-hosted service, eliminating months of engineering effort.

If the agent infrastructure is commoditized, where is the competitive moat for businesses?

The moat moves up the stack. It's no longer about who can build the best agent infrastructure. It's about your proprietary data, the unique tools you connect to the agent, and, most importantly, the control plane you use to ensure reliable, secure, and cost-effective execution on top of the commodity AaaS layer.

How does a Semantic Graph prevent the agent from making mistakes?

It reduces the surface area for error by raising the level of abstraction. Instead of asking a probabilistic LLM to write brittle, low-level database queries, the agent calls a high-level, deterministic API. The graph handles the complex implementation details, making the agent's task simpler and its outcomes far more predictable and reliable.