For years, enterprise AI has been constrained by a fundamental architectural flaw: data silos. Not just silos in our databases, but silos in our semantic understanding. Our text models lived in one vector space, our image models in another, and video or audio were often relegated to metadata-only search or complex, brittle pipelines. Building a truly comprehensive Retrieval-Augmented Generation (RAG) system required a team of engineers to stitch together disparate models, manage multiple vector indexes, and pray the cross-modal alignment held up under production load.

Last night, Google fundamentally changed the equation. The release of Gemini Embedding 2, the first native multimodal embedding model built on the Gemini architecture, isn't just an incremental update. It's a paradigm shift that signals the end of the siloed approach and the true beginning of enterprise-grade, native multimodal RAG.

Available now in public preview via the Gemini API and Vertex AI, this model eliminates the need for complex data pipelines by mapping text, images, video, and even audio into a single, unified semantic embedding space. Let's break down the technical specifications and why this is a tectonic shift for AI architects and developers.

A Unified Vector Space: The Architectural Revolution

At its core, Gemini Embedding 2 allows developers to treat all data modalities as first-class citizens within the same vector database. Instead of generating text vectors with one model and image vectors with another, then attempting to align them, Gemini Embedding 2 projects the semantic essence of different media directly into the same high-dimensional space.

This architectural simplification is profound. Tasks that were previously complex, multi-stage operations can now be executed within a unified framework:

- Retrieval-Augmented Generation (RAG): Query with text, retrieve relevant video clips, images, and document snippets to ground an LLM's response.

- Semantic Search: Search for a concept and get back all related assets, regardless of their original format.

- Recommendation Engines: Recommend a product based on its image, description, and user review videos simultaneously.

- Data Clustering & Anomaly Detection: Identify thematic clusters across your entire unstructured dataset, from audio logs to marketing images.
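To make the unified-space idea concrete, here is a minimal sketch of cross-modal retrieval over a single index. The vectors are tiny hand-written placeholders rather than real model output, and `Asset`, `cosine`, and `search` are hypothetical names used only for illustration. In a real system each vector would come from the embedding model and live in a vector database.

```python
import math
from dataclasses import dataclass


@dataclass
class Asset:
    uri: str        # where the original media lives (made-up paths)
    modality: str   # "text", "image", "video", "audio", ...
    vector: list    # embedding in the shared space (placeholder values)


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(index, query_vector, top_k=3):
    """Rank every asset against the query, regardless of modality."""
    ranked = sorted(
        index,
        key=lambda asset: cosine(query_vector, asset.vector),
        reverse=True,
    )
    return ranked[:top_k]


# Toy 3-dimensional vectors standing in for real embeddings.
index = [
    Asset("docs/return-policy.pdf", "text", [0.9, 0.1, 0.0]),
    Asset("img/red-sneaker.png", "image", [0.1, 0.9, 0.2]),
    Asset("video/sneaker-review.mp4", "video", [0.2, 0.8, 0.3]),
    Asset("audio/support-call.wav", "audio", [0.7, 0.2, 0.4]),
]

# Pretend this is the embedding of a text query about red sneakers.
query = [0.15, 0.85, 0.25]
results = search(index, query, top_k=2)
for asset in results:
    print(asset.modality, asset.uri)
```

Because every asset shares one space, a single similarity function and a single index cover all modalities. With these toy values, the text-like query surfaces the image and the video ahead of the PDF and the audio file.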

Technical Specifications: Under the Hood

Gemini Embedding 2 inherits the powerful multimodal capabilities of the Gemini family and offers well-defined support for a wide range of inputs:

- Text: An expanded context window supporting up to 8192 input tokens.

- Images: Processing for up to 6 images per request (PNG and JPEG).

- Video: Clips of up to 120 seconds each (MP4 and MOV).

- Audio: Native audio ingestion and embedding without requiring an intermediate Automatic Speech Recognition (ASR) step to transcribe it to text. This is a game-changer for directly understanding sentiment, tone, and non-verbal cues.

- Documents: Direct embedding of PDF files up to 6 pages long.

Crucially, the model also supports interleaved input. This means you can pass multiple modalities within the same API call, such as an "image + text description" or a "video + text prompt." The resulting vector captures the complex semantic relationship between these modalities, enabling a far deeper level of contextual understanding. In an e-commerce context, this is the difference between understanding a product photo and its description separately, versus understanding them as a single, cohesive entity.
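One practical consequence of these limits is that it pays to check a request before sending it. The sketch below is a hypothetical pre-flight validator, not part of any Google SDK: `Part` and `validate_request` are invented names, the limits are simply the figures listed in this post, and treating the token limit as a total across all text parts in a request is an assumption.

```python
from dataclasses import dataclass

# Limits as listed in this post; confirm against the official docs before use.
MAX_TEXT_TOKENS = 8192
MAX_IMAGES = 6
MAX_VIDEO_SECONDS = 120
MAX_PDF_PAGES = 6


@dataclass
class Part:
    kind: str       # "text", "image", "video", "audio", or "pdf"
    size: int = 0   # tokens for text, seconds for video, pages for pdf


def validate_request(parts):
    """Return a list of limit violations; an empty list means the request fits."""
    problems = []

    # Assumption: the token limit applies to all text parts combined.
    text_tokens = sum(p.size for p in parts if p.kind == "text")
    if text_tokens > MAX_TEXT_TOKENS:
        problems.append(f"text: {text_tokens} tokens exceeds {MAX_TEXT_TOKENS}")

    image_count = sum(1 for p in parts if p.kind == "image")
    if image_count > MAX_IMAGES:
        problems.append(f"images: {image_count} exceeds {MAX_IMAGES}")

    for p in parts:
        if p.kind == "video" and p.size > MAX_VIDEO_SECONDS:
            problems.append(f"video: {p.size}s exceeds {MAX_VIDEO_SECONDS}s")
        if p.kind == "pdf" and p.size > MAX_PDF_PAGES:
            problems.append(f"pdf: {p.size} pages exceeds {MAX_PDF_PAGES}")

    return problems


# An interleaved "image + text description" request that fits the limits.
ok_request = [Part("image"), Part("text", size=40)]
# A video that is too long for a single request.
bad_request = [Part("video", size=150), Part("text", size=12)]

print(validate_request(ok_request))
print(validate_request(bad_request))
```

A check like this lets an ingestion pipeline split long videos or large PDFs into compliant chunks up front instead of discovering the limit through a failed API call.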

Matryoshka Representation Learning (MRL): Performance Meets Practicality

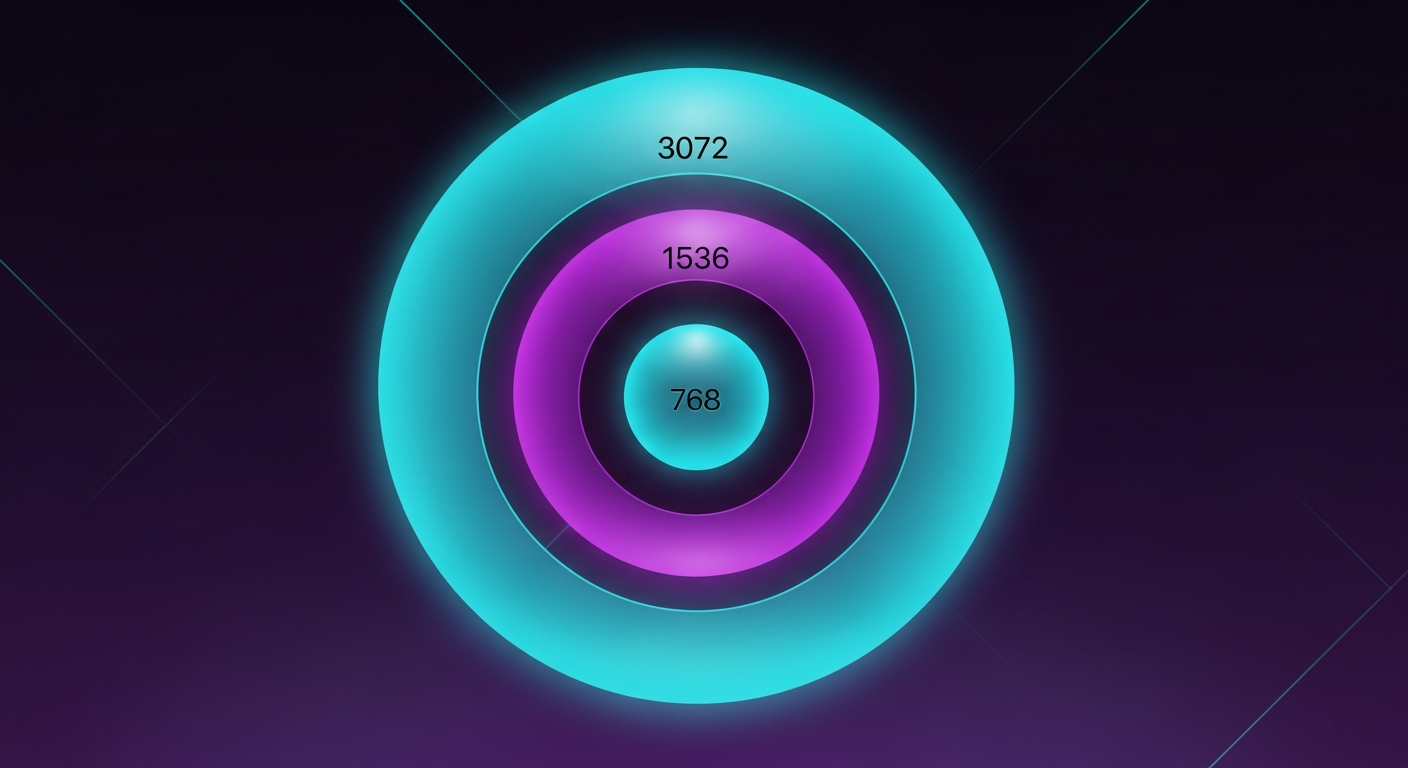

At the vector-representation level, Gemini Embedding 2 uses a technique called Matryoshka Representation Learning (MRL). This method structures the embedding vector like a Russian nesting doll: the most important semantic information is concentrated in the leading dimensions, so the vector can be shortened while preserving most of its semantic integrity.

By default, the model outputs a high-fidelity 3072-dimension vector. However, for applications where storage costs and retrieval latency are critical constraints, developers can truncate this vector to a smaller size that the model was trained to support. The officially supported and optimized dimensions are 3072, 1536, and 768. This gives engineering teams a crucial lever to balance performance, cost, and accuracy without retraining a model or re-embedding their entire dataset: the shorter vector is a prefix of the full one, so only the vector index needs to be rebuilt at the new size.
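As a rough illustration of what truncation involves, the sketch below keeps the leading components of a vector and rescales the result to unit length, which is common practice with MRL-style embeddings before cosine or dot-product comparison. `truncate_embedding` and `storage_bytes` are hypothetical helpers, the input is synthetic data rather than real model output, and whether the API already returns normalized vectors at smaller sizes is something to confirm in the official documentation.

```python
import math

SUPPORTED_DIMS = (3072, 1536, 768)  # sizes named in this post


def truncate_embedding(vector, dim):
    """Keep the first `dim` components and rescale them to unit length."""
    if dim not in SUPPORTED_DIMS:
        raise ValueError(f"dim must be one of {SUPPORTED_DIMS}, got {dim}")
    if dim > len(vector):
        raise ValueError("cannot truncate to more dimensions than the input has")
    head = vector[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in head]


def storage_bytes(num_vectors, dim, bytes_per_value=4):
    """Raw float32 storage for the vectors alone, ignoring index overhead."""
    return num_vectors * dim * bytes_per_value


# Synthetic stand-in for a full-size embedding.
full = [math.sin(i + 1) for i in range(3072)]
small = truncate_embedding(full, 768)

print(len(small))
print(storage_bytes(1_000_000, 3072) / storage_bytes(1_000_000, 768))
```

At float32 precision, one million 3072-dimension vectors take about 12.3 GB of raw storage, while the 768-dimension versions take a quarter of that, which is the cost lever the paragraph above describes.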

Benchmark Dominance: The SOTA for Multimodal RAG

Google hasn't just simplified the architecture; they've raised the bar on performance. Across multiple rigorous benchmarks, Gemini Embedding 2 demonstrates clear superiority, particularly in complex, cross-modal tasks.

MTEB (Massive Text Embedding Benchmark) - Multilingual:

In text-to-text semantic matching across languages, Gemini Embedding 2 takes the lead:

- Gemini Embedding 2: 69.9

- Gemini-embedding-001: 68.4

- Amazon Nova 2: 63.8

- Voyage 3.5: 58.5

MTEB - Code Semantic Understanding:

For developers building RAG over technical documentation and codebases, the improvement is substantial:

- Gemini Embedding 2: 84.0

- Gemini-embedding-001: 76.0 (Gemini Embedding 2 scores 8 points higher)

TextCaps - Text-to-Image Retrieval:

When searching for images using text queries:

- Gemini Embedding 2: 89.6

- Voyage 3.5: 79.4

- Amazon Nova 2: 76.0 (Gemini Embedding 2 leads by 13.6 points here, and by 10.2 points over Voyage 3.5)

TextCaps - Image-to-Text Retrieval:

- Gemini Embedding 2: 97.4

- Amazon Nova 2: 88.9

- Voyage 3.5: 88.6

Real-World Impact: Everlaw and Sparkonomy

The theoretical advantages of this model are already translating into tangible business value for early adopters.

Everlaw, a leading legal tech platform, is using Gemini Embedding 2 for e-discovery, a high-stakes environment where a missed document can cost millions. CTO Max Christoff noted that the model "significantly improved search precision and recall when processing millions of records," specifically highlighting its ability to unlock powerful search capabilities across previously siloed image and video evidence files.

Sparkonomy has seen even more dramatic architectural benefits. Co-founder Guneet Singh stated that by leveraging the native multimodal capabilities of Gemini Embedding 2, they completely eliminated the need for a separate LLM inference step in their pipeline. The result? A staggering 70% reduction in latency and a doubling of their text-image/text-video semantic similarity scores (from 0.4 to 0.8).

The Epsilla Perspective: Orchestrating Multimodal Agentic RAG

Google’s release proves our core thesis: the future of RAG is a single, unified, multimodal vector space. But generating a powerful 3072-dimensional vector is only the first step. To derive actual business value, you need to store, index, retrieve, and act upon those vectors at scale.

This is exactly what Epsilla is built for.

We are the enterprise orchestration layer designed to handle the massive scale and complexity of multimodal data. While Google provides the embedding engine, Epsilla provides the "Agent-as-a-Service" infrastructure that makes it usable in production.

- Sub-Millisecond Retrieval: Our purpose-built distributed vector database architecture is optimized to ingest and index these massive 3072-dimensional Gemini vectors, ensuring sub-millisecond latency for complex multimodal queries.

- Agentic Workflows: Epsilla isn't just a database; it's a workflow engine. We let you plug Gemini Embedding 2 into sophisticated agentic pipelines, routing retrieved data to specialized models for decision-making and action execution.

- Enterprise Security: We provide the necessary RBAC, audit logging, and data governance controls to deploy these multimodal agents in highly regulated environments safely.

Gemini Embedding 2 solved the fragmentation of data. Epsilla solves the fragmentation of execution. Together, they represent the new standard for Enterprise AI.