The current performance ceiling for Agentic AI is no longer just reasoning capability; it is the fundamental limitation of long-term memory. As agents interact over longer horizons, simply shoving endless conversational histories into an expanding context window creates "noise toxicity" (the Lost in the Middle phenomenon), diluting the signal and skyrocketing computational costs.

A joint research team from UIUC, Tsinghua University, and Microsoft Research recently published a paper introducing PlugMem: A Task-Agnostic Plugin Memory Module for LLM Agents. This framework proposes a radical solution: decoupling "reasoning" from "memory storage" by building an efficient, external "L2 Cache" outside of the core LLM "CPU."

Standardized Memory Tuples

In real-world applications, agent trajectories are messy and heterogeneous. PlugMem solves this by abstracting chaotic logs into standardized experience tuples, borrowing concepts from reinforcement learning: (Goal, State, Action, Reward, Next State).

These fragmented trajectories are then reconstructed into two distinct, compact semantic units:

- Propositions: Describing "what the facts are" (forming the bedrock of semantic memory).

- Prescriptions: Describing "how to execute a process" (depositing procedural, workflow knowledge).

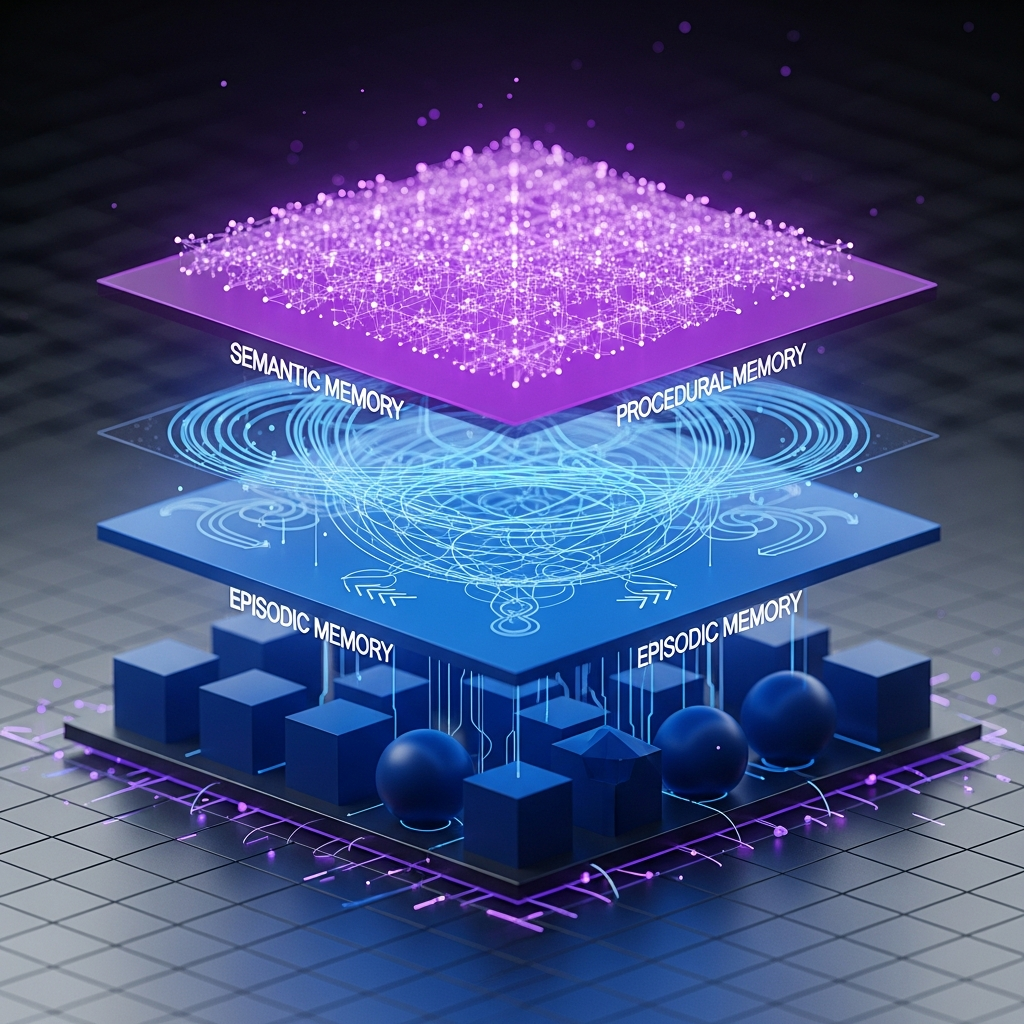

The Three-Layered Cognitive Model

To organize these units, PlugMem employs a three-tiered cognitive model that mirrors human memory:

- Semantic Memory: Stores "What it is" (facts, concepts, and long-term common sense).

- Procedural Memory: Stores "How to do it" (skills, actions, and workflows).

- Episodic Memory: Acts as the underlying anchor, storing "Short-term trajectories" (the raw sequences used for retrospective verification).

A Knowledge-Centric Graph Design

While traditional GraphRAG focuses heavily on entities, PlugMem organizes its memory as a Knowledge-Centric Graph. It uses Propositions and Prescriptions as its core units.

By utilizing lightweight "Concept/Intent" nodes as indices, the system points directly to high-density "Knowledge Payloads." This design maximizes memory density, compressing redundant noise and retaining only the essential, concentrated knowledge points.

From an information theory perspective, this achieves a massive utility-cost win. In the LongMemEval benchmark (testing 115,000 tokens of long-range interaction), PlugMem achieved a 75.1% high-precision extraction rate. It maintained peak decision-making utility while reducing token costs by 1 to 2 orders of magnitude—essentially trading fewer tokens for higher "IQ."

Furthermore, it proved its versatility:

- In HotpotQA, it utilized "hop retrieval" across the graph, preventing the agent from getting lost in massive text blocks.

- In WebArena, it successfully deposited procedural knowledge from human demonstrations, significantly improving task success rates.

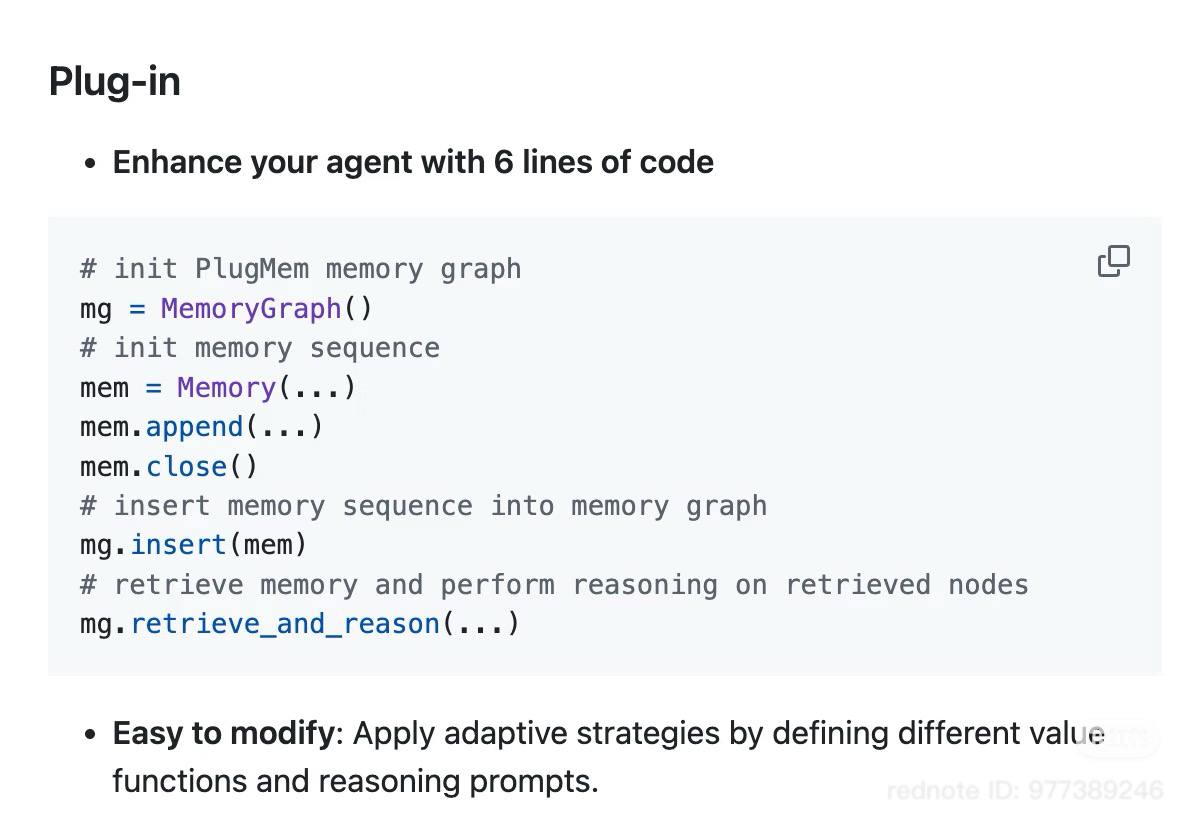

Integrating PlugMem: The 6-Line Implementation

Despite its complex cognitive architecture, PlugMem was designed to be a "plug-and-play" module that can be integrated into existing agent pipelines with minimal friction.

With just a few lines of code, developers can initialize a MemoryGraph, append a Memory sequence, insert it into the graph, and seamlessly execute retrieve_and_reason().

The Epsilla Perspective: The Architecture of Sovereign Memory

The PlugMem research deeply aligns with a reality we see every day at Epsilla: relying on context windows for enterprise memory is an architectural dead end.

PlugMem's brilliant realization is that raw interaction history is toxic. To scale agents in the enterprise, you must distill raw interactions into dense, reusable knowledge units (Propositions and Prescriptions).

However, for a Fortune 500 company, this "L2 Cache" cannot simply exist in a vacuum. It must be sovereign, secure, and deeply integrated into the company's existing data lakes and compliance frameworks.

This is where Epsilla bridges the gap between academic frameworks and enterprise deployment. While PlugMem proves the theoretical superiority of a three-tiered, graph-based memory module, Epsilla provides the actual enterprise-grade infrastructure to host it. By providing a scalable, secure Knowledge Retrieval backbone, we allow enterprises to build these exact types of high-density, multi-layered memory graphs.

When you decouple memory from the LLM, you are no longer renting your organization's intelligence from a foundation model provider. You are building a proprietary, sovereign cognitive asset that compounds in value over time. That is the true promise of Agentic AI.